SQL + Python in one notebook: why analysts at fast-growing startups are switching

Unify SQL and Python in reactive notebooks to speed analysis, keep metrics consistent, and query live warehouse data with AI assistance.

Startups are ditching outdated workflows. Analysts waste time switching between SQL editors, Python IDEs, and visualization tools, leading to inefficiency and frustration. Combined SQL-Python notebooks solve this by unifying these tasks into a single platform, making analysis faster and more collaborative.

Key Takeaways:

Time Saved: No more exporting CSVs or juggling tools. Query, analyze, and visualize in one place.

Improved Collaboration: Shared metrics and live updates ensure teams work with consistent, real-time data.

Scalability: Handles growing datasets directly in data warehouses like Snowflake or BigQuery.

AI Assistance: Generates SQL and Python code from plain English, speeding up analysis.

Governance: Centralized metric definitions eliminate discrepancies across teams.

Querio is leading this shift. By combining SQL, Python, and AI in one workspace, it streamlines analytics, reduces errors, and accelerates decision-making. For startups, this means faster insights and fewer headaches as they grow.

Problems with Separate SQL and Python Tools

Switching Between Tools Wastes Time

Analysts spend countless hours every week juggling SQL editors and Python IDEs. Each switch isn’t just a change of windows - it disrupts their focus and derails the flow of analysis. At Meta, for instance, 90% of data scientists and engineers relied on traditional SQL-based tools before unified notebooks became an option [5].

The process of moving data between these tools is a constant source of frustration. You run a query in SQL, export the results as a CSV, and then import that file into Python. Or you end up writing repetitive boilerplate code with SQLAlchemy just to get your data into a dataframe [7][2]. Instead of analyzing data, you’re stuck doing tedious "data plumbing."
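
To make the "data plumbing" concrete, here is a minimal sketch of the export-and-reimport round trip. It uses Python's built-in sqlite3 and csv modules as stand-ins for a real SQL editor and warehouse, and the `orders` table and its values are hypothetical.

```python
import csv
import io
import sqlite3

# Hypothetical in-memory table standing in for a warehouse table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, amount REAL)")
conn.executemany("INSERT INTO orders VALUES (?, ?)", [(1, 19.99), (2, 5.00)])

# Step 1: run the query in the "SQL tool".
rows = conn.execute("SELECT id, amount FROM orders ORDER BY id").fetchall()

# Step 2: export to CSV (an in-memory buffer stands in for a file on disk).
buffer = io.StringIO(newline="")
writer = csv.writer(buffer)
writer.writerow(["id", "amount"])
writer.writerows(rows)

# Step 3: re-import in the "Python tool". Every value comes back as a string,
# so types have to be restored by hand before any analysis can start.
buffer.seek(0)
reimported = list(csv.DictReader(buffer))
total = sum(float(row["amount"]) for row in reimported)
```

Even in this tiny case, the numeric columns return as text after the CSV hop, which is the kind of cleanup work a unified notebook is meant to remove.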

And let’s not forget the nightmare of dealing with dependency conflicts and version mismatches, often dubbed "environment hell" [1]. Hours that could be spent solving business problems are wasted fixing library installations across machines. This constant back-and-forth between tools doesn’t just slow down individuals - it drags down the entire team’s productivity.

Team Collaboration Breaks Down

When teams rely on separate tools, maintaining consistent metrics becomes a major challenge. SQL editors, Python environments, and notebooks often hold their own copies of data and logic, leading to discrepancies. For example, one analyst might calculate monthly revenue in SQL, while another does it in Python, only to end up with conflicting results [8].

Version control adds another layer of complexity. Since notebooks are stored as JSON files, tracking changes or merging work in Git is a headache. In some cases, this can even result in lost code [6].

Security risks also rise. Once data leaves a secure SQL environment and is processed in local Python instances, enforcing Access Control Lists becomes nearly impossible. This increases the chance of accidental data leaks. Additionally, when analysts share their results as static snapshots, the data doesn’t update automatically. Without manual re-runs, teams risk working with outdated or misleading results [5]. These gaps in collaboration only grow as teams expand and data volumes increase.

Scaling Becomes Harder

The inefficiencies from tool switching and inconsistent workflows become even more pronounced as organizations grow. What works for a small team of five becomes unsustainable for a team of twenty.

Traditional notebooks are limited by local CPU and memory, which quickly becomes a bottleneck as datasets grow. For example, tools like Pandas load entire datasets into memory, often leading to out-of-memory errors that bring analysis to a halt [9]. While SQL databases can handle massive datasets, their potential is wasted when data constantly needs to be transferred between systems.
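
The difference between pulling everything into local memory and letting the database do the work can be sketched as follows. This is an illustration only: sqlite3 stands in for a warehouse, and the `events` table is hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (segment TEXT, revenue REAL)")
conn.executemany(
    "INSERT INTO events VALUES (?, ?)",
    [("smb", 100.0), ("smb", 50.0), ("enterprise", 900.0)],
)

# Memory-heavy pattern: pull every row to the client, then aggregate locally.
all_rows = conn.execute("SELECT segment, revenue FROM events").fetchall()
local_totals = {}
for segment, revenue in all_rows:
    local_totals[segment] = local_totals.get(segment, 0.0) + revenue

# Pushdown pattern: the database aggregates, so only one row per segment
# ever crosses into Python.
pushed_totals = dict(
    conn.execute("SELECT segment, SUM(revenue) FROM events GROUP BY segment")
)
```

Both approaches give the same totals here, but the first one scales with the number of rows while the second scales with the number of segments.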

Early architectural decisions can turn into costly problems later. What starts as a minor inconvenience - like copying data between tools - can snowball into a bottleneck that slows down critical decision-making [8]. For growing teams, these inefficiencies can become major obstacles.

Why Analysts Are Moving to Combined SQL-Python Notebooks

End-to-End Analysis in One Place

The move to combined SQL-Python notebooks is all about cutting out inefficiencies. Analysts can now write SQL queries and immediately transfer the results into a Python dataframe for cleaning, analysis, and modeling - all within the same interface [1][2].

This integrated workflow keeps analysts focused, allowing them to seamlessly move from data extraction to visualization without needing to switch between multiple tools [1][2].

What’s more, modern notebooks come pre-equipped with essential libraries like Pandas, Scikit-learn, and DuckDB. This eliminates the hassle of debugging dependency issues and lets analysts concentrate on uncovering insights [1].

"It's a lot easier (and faster) to query, visualize, and contextualize in a single place rather than switch back and forth between different tools" [2].

These efficient workflows lay the foundation for advanced features that further enhance an analyst's capabilities.

Features That Matter Most

Modern notebooks go beyond smooth workflows by introducing features that reshape how analysts work. One standout is reactive execution, where notebooks automatically update computations when underlying data or dependencies change [3].
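
As a rough illustration of the idea, and not any particular product's implementation, reactive execution can be modeled as cells that declare their dependencies and re-run whenever an upstream cell changes. The `ReactiveNotebook` class and the cell names below are hypothetical.

```python
from typing import Any, Callable, Dict, List


class ReactiveNotebook:
    """Toy model of reactive cells: running a cell re-runs its dependents."""

    def __init__(self) -> None:
        self.funcs: Dict[str, Callable[..., Any]] = {}
        self.deps: Dict[str, List[str]] = {}
        self.values: Dict[str, Any] = {}
        self.run_log: List[str] = []

    def define(self, name: str, deps: List[str], func: Callable[..., Any]) -> None:
        # Defining (or redefining) a cell runs it immediately.
        self.funcs[name] = func
        self.deps[name] = deps
        self._run(name)

    def _run(self, name: str) -> None:
        args = [self.values[dep] for dep in self.deps[name]]
        self.values[name] = self.funcs[name](*args)
        self.run_log.append(name)
        # Propagate to every cell that reads this one. A real engine would
        # sort the graph topologically to avoid duplicate runs.
        for other, other_deps in self.deps.items():
            if name in other_deps:
                self._run(other)


nb = ReactiveNotebook()
nb.define("raw", [], lambda: [3, 8, 12])
nb.define("threshold", [], lambda: 5)
nb.define("filtered", ["raw", "threshold"], lambda raw, t: [x for x in raw if x > t])
nb.define("count", ["filtered"], lambda filtered: len(filtered))

# Editing one upstream cell refreshes everything that depends on it.
nb.define("threshold", [], lambda: 10)
```

After the last line, `filtered` and `count` have been recomputed without anyone re-running them by hand, which is the behavior the paragraph above describes.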

The integration between SQL and Python has also taken a leap forward. Analysts can now embed Python variables and functions directly into SQL queries, enabling more advanced and dynamic business logic [2][10].
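
The exact syntax for referencing Python variables inside a SQL cell varies by notebook product and is not specified here. As a stand-in, the sketch below uses bound parameters from Python's standard DB-API (via sqlite3), with a hypothetical `signups` table.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE signups (channel TEXT, signup_date TEXT)")
conn.executemany(
    "INSERT INTO signups VALUES (?, ?)",
    [("ads", "2026-01-15"), ("organic", "2026-02-03"), ("ads", "2025-12-30")],
)

# Ordinary Python variables that drive the query.
start_date = "2026-01-01"
channel = "ads"

# Named placeholders keep the SQL readable and avoid string concatenation,
# which also protects against SQL injection.
query = """
    SELECT COUNT(*)
    FROM signups
    WHERE channel = :channel AND signup_date >= :start_date
"""
(count,) = conn.execute(
    query, {"channel": channel, "start_date": start_date}
).fetchone()
```

Changing `start_date` or `channel` in Python changes the query result without touching the SQL text.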

AI-assisted query generation has become another game-changer. Tools now follow a "review-first" model, requiring human approval for suggested code before execution. This reduces the risk of silent data corruption or accidental execution of production queries [1]. In fact, the widespread use of AI tools like GitHub Copilot - adopted by 62% of developers in 2024 - has set a new standard for what analysts expect from their tools [3].
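
One way to picture a "review-first" gate is a wrapper that refuses to execute suggested SQL until a person has approved it. This is a minimal sketch under that assumption. The `Suggestion` type and `run_suggestion` function are hypothetical and do not come from any specific tool.

```python
import sqlite3
from dataclasses import dataclass


@dataclass
class Suggestion:
    """AI-suggested SQL plus an explicit human sign-off flag (hypothetical)."""

    sql: str
    approved: bool = False


def run_suggestion(conn: sqlite3.Connection, suggestion: Suggestion) -> list:
    # Refuse to execute anything a person has not reviewed and approved.
    if not suggestion.approved:
        raise PermissionError("Suggested SQL must be approved before it runs")
    return conn.execute(suggestion.sql).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, amount REAL)")
conn.execute("INSERT INTO orders VALUES (1, 40.0), (2, 60.0)")

suggestion = Suggestion(sql="SELECT SUM(amount) FROM orders")

try:
    run_suggestion(conn, suggestion)
    blocked = False
except PermissionError:
    blocked = True

suggestion.approved = True  # the analyst has read the SQL and signed off
result = run_suggestion(conn, suggestion)
```

The first call is rejected, and the query only runs after the approval flag is set.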

Industry Adoption in 2025-2026

The demand for faster, integrated analytics has driven widespread adoption of these unified notebooks, particularly among startups. A 2023 survey revealed that 68% of career analysts use SQL and Python together, with over 90% of data professionals relying on Python and 53% using SQL as their primary query language [11].

The rise of "SQL-first" notebooks has been a key trend through 2025 and into 2026. These notebooks treat SQL as a core feature, offering advanced editors with capabilities like autocomplete, linting, and schema exploration alongside Python. Startups have embraced these tools because they address critical pain points: 64% of data professionals cite technical debt as a top frustration, and 92% of executives report difficulties scaling AI initiatives due to mismatched tools and workflows [2][4].

How Querio Combines SQL and Python in One Workspace

AI-Powered Analytics Workspace

Querio takes the complexity out of data analysis by transforming plain-English questions into SQL and Python code that analysts can inspect and tweak. For example, if someone asks, "What's our monthly revenue trend for Q1 2026?", the platform produces optimized queries, breaking down each execution step for transparency. This is especially crucial for startups, where precise data drives critical decisions.

The platform's governed metrics layer is a game-changer. Instead of letting each analyst define metrics like "Active Users" or "Monthly Recurring Revenue" in their own way, Querio enforces consistent, pre-approved definitions created by the data team. This consistency ensures everyone is speaking the same "data language." Startups using Querio have reported cutting query development time by two-thirds, reducing the process from 30 minutes to just 10.

"Querio lets us ship analyses 5x faster without sacrificing governance." – Alex Liu, Head of Analytics, Brex (2026 case study)

In addition to speeding up workflows, Querio connects directly to data warehouses for real-time insights.

Direct Warehouse Connections

Querio integrates seamlessly with major data warehouses like Snowflake, BigQuery, and Redshift, running queries directly on their infrastructure. This eliminates the need for data duplication and ensures analysts always work with the most up-to-date information.

This setup is built to handle scaling data needs. For instance, a Series B startup with $10 million ARR and 500GB-2TB of transaction history can rely on Querio to handle complex queries, such as "monthly revenue by customer segment with year-over-year comparison", in just 1-2 seconds. The warehouse infrastructure manages the heavy lifting, ensuring performance remains steady even as datasets grow from gigabytes to terabytes.

And it doesn’t stop there - Querio’s reactive notebooks ensure that analysts stay in sync with every data update.

Notebooks That Update Automatically

Querio’s reactive notebooks simplify collaboration and keep analyses current. Any changes to a SQL query or Python transformation automatically refresh all related cells and visualizations. For instance, if the data engineering team updates a customer dimension table, analysts working on segmentation analyses are notified, and their notebooks reflect the updates instantly.

The platform also offers cell-level version control, which tracks every change with timestamps and allows users to roll back to earlier versions if needed. Real-time cursors and built-in comment threads make teamwork smoother, enabling analysts to collaborate effectively without missing a beat.
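
How Querio implements this internally is not described here. As a generic sketch of the idea, cell-level history can be modeled as an append-only list of timestamped versions, where a rollback re-saves an older version instead of deleting newer ones. The `CellHistory` class is hypothetical.

```python
from datetime import datetime, timezone
from typing import List, Tuple


class CellHistory:
    """Append-only version history for one notebook cell (illustrative)."""

    def __init__(self, source: str) -> None:
        self.versions: List[Tuple[datetime, str]] = []
        self.save(source)

    def save(self, source: str) -> None:
        # Every change is stored with a UTC timestamp.
        self.versions.append((datetime.now(timezone.utc), source))

    @property
    def current(self) -> str:
        return self.versions[-1][1]

    def rollback(self, index: int) -> None:
        # Rolling back appends the old source as a new version,
        # so no history is ever lost.
        self.save(self.versions[index][1])


cell = CellHistory("SELECT * FROM customers")
cell.save("SELECT id, segment FROM customers")
cell.rollback(0)
```

After the rollback, the cell holds its original source again and the history contains three entries.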

5 Reasons Startups Choose Querio for SQL and Python Work

Startups thrive on agility, and Querio provides the tools to streamline analytics and decision-making. Here are five standout reasons why startups turn to Querio for their SQL and Python needs.

One Environment for All Analytics Work

With Querio, SQL and Python come together in a single notebook, removing the hassle of juggling multiple tools. Analysts can seamlessly write a SQL query in one cell to fetch customer data and then process it with Python in the next - all within the same platform [12].

The platform’s reactive setup ensures that any upstream changes, like updates to a table definition, automatically refresh dependent analyses. This means teams always work with the most up-to-date information, eliminating the need for manual updates.

AI Generates Queries from Plain English

Querio takes the complexity out of SQL writing. Analysts can simply ask a question like, "What's the customer retention rate by acquisition channel for Q1 2026?" and let the platform generate optimized SQL and Python code. Every line of generated code is fully visible for review and edits, ensuring transparency.

This feature has helped startups achieve reporting cycles 20 times faster [12]. Data teams can focus on strategic priorities while non-technical users independently handle simpler analyses, saving time across the board.

Consistent Metrics Across Teams

Querio's Context Layer makes inconsistent metric definitions a thing of the past. By defining key metrics - like "Active User" or "Monthly Recurring Revenue" - in a centralized semantic layer, those definitions automatically apply across all dashboards, analyses, and AI-generated queries.

Stored in version-controlled files [12], this setup ensures everyone in the organization is working with the same definitions, avoiding the chaos of manually updating spreadsheets or dashboards.
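
The underlying idea can be sketched with a shared registry that every query builds on. The format of Querio's actual definition files is not shown in this article, so the `METRICS` dictionary, the `metric_query` helper, and the `usage` table below are hypothetical.

```python
import sqlite3
from typing import Optional

# Hypothetical shared registry: one approved SQL expression per metric.
METRICS = {
    "active_users": "COUNT(DISTINCT CASE WHEN events >= 1 THEN user_id END)",
}


def metric_query(metric: str, table: str, group_by: Optional[str] = None) -> str:
    # Raises KeyError for metrics nobody has defined, by design.
    # Table and column names are trusted inputs in this sketch.
    expr = METRICS[metric]
    if group_by:
        return (
            f"SELECT {group_by}, {expr} AS {metric} "
            f"FROM {table} GROUP BY {group_by}"
        )
    return f"SELECT {expr} AS {metric} FROM {table}"


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE usage (user_id INTEGER, team TEXT, events INTEGER)")
conn.executemany(
    "INSERT INTO usage VALUES (?, ?, ?)",
    [(1, "growth", 3), (2, "growth", 0), (3, "sales", 2)],
)

# Two different analyses reuse the same definition of "active".
(total_active,) = conn.execute(metric_query("active_users", "usage")).fetchone()
by_team = dict(conn.execute(metric_query("active_users", "usage", "team")))
```

Because both queries are generated from the same expression, the company-wide number and the per-team breakdown cannot drift apart.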

Grows with Your Team

Querio’s warehouse-native architecture integrates directly with major data warehouses such as Snowflake, BigQuery, and Redshift. Queries run on the warehouse’s infrastructure, ensuring performance remains strong even as datasets grow.

The platform also offers an unlimited viewer model, enabling startups to expand data access without worrying about escalating costs. Unlike traditional per-seat pricing, which can range from $6,000 to $50,000 annually, Querio’s flat-rate pricing keeps analytics budgets manageable for teams across marketing, sales, and customer success. This scalability supports startups as they grow, delivering insights without breaking the bank.

Faster Decisions with Live Data

Querio enables real-time analytics by eliminating delays caused by batch processing or scheduled updates. Analysts can query live data directly, with upstream changes automatically reflected in dependent analyses. This ensures dashboards and reports always display the most current information.

"A single person who can write a complex SQL query to define a customer cohort and then immediately build a churn prediction model for that group in Python can deliver a project in half the time it would take a siloed team." - Querio Blog [13]

This speed empowers startups to react quickly to trends and make informed decisions as they happen, a critical edge in fast-paced environments.

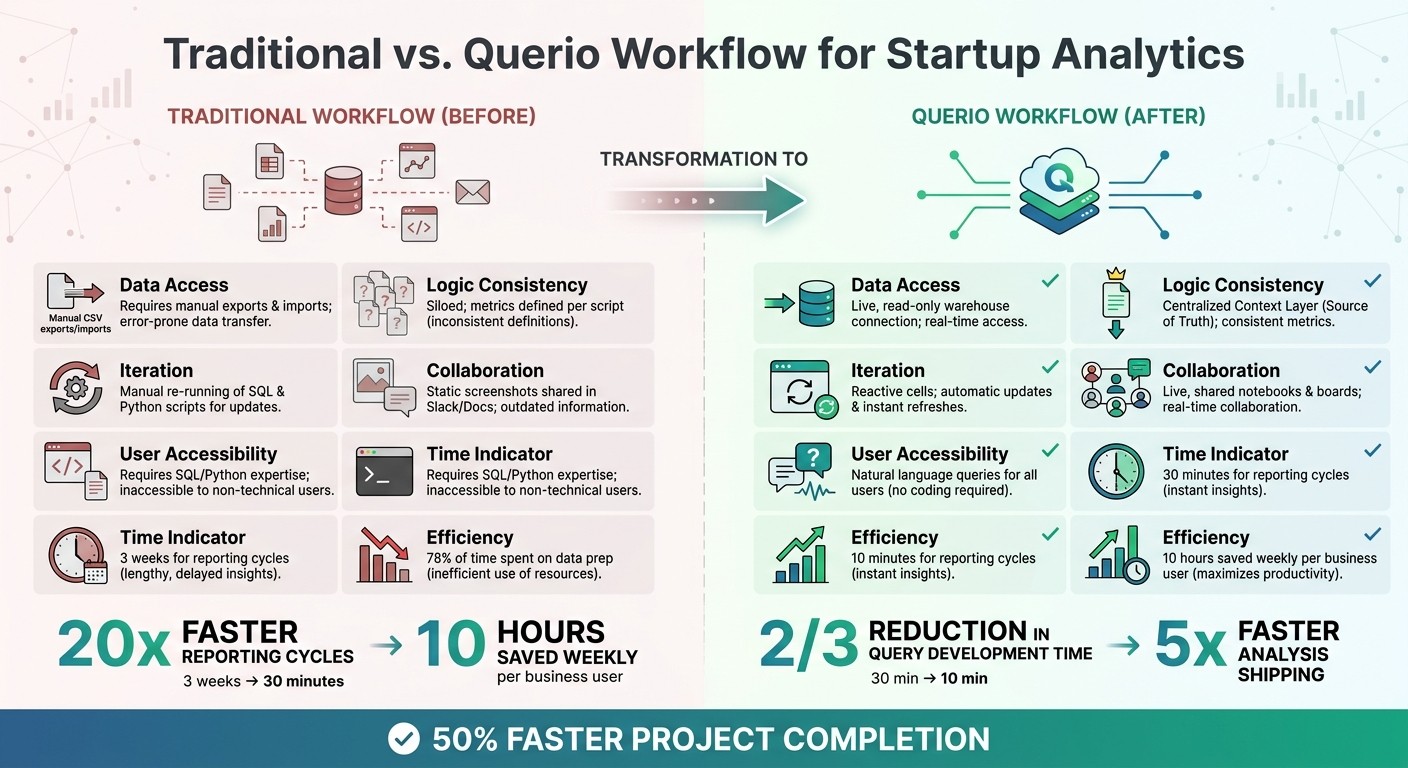

Before and After: How Querio Changes Startup Analytics

Traditional Analytics Workflow vs Querio: Time Savings and Efficiency Comparison

Workflow Comparison: Before and After Querio

Switching to Querio from scattered tools transforms how startups handle analytics. Previously, analysts at fast-growing startups juggled multiple tools - SQL clients, Python IDEs, BI platforms - manually exporting CSVs and rerunning scripts every time data changed. Enter Querio: an AI-powered notebook workspace where SQL and Python work together seamlessly. This shift eliminates the inefficiencies caused by fragmented tools and constant context switching, as mentioned earlier [12][13].

The impact? Reporting cycles that once dragged on for 3 weeks now wrap up in just 30 minutes [12]. Business users save around 10 hours weekly thanks to self-service analytics [12]. Meanwhile, analysts reclaim time previously lost to data prep and tool juggling - tasks that used to eat up 78% of their work hours [12].

| Feature | Traditional Workflow (Before) | Querio Workflow (After) |
|---|---|---|
| Data Access | Manual CSV exports/imports | Live, read-only warehouse connection |
| Logic Consistency | Siloed; metrics defined per script | Centralized Context Layer (Source of Truth) |
| Iteration | Manual re-running of SQL, Python | Reactive cells; automatic updates |
| Collaboration | Static screenshots in Slack/Docs | Live, shared notebooks and boards |
| User Accessibility | Requires SQL/Python expertise | Natural language queries for all users |

Querio’s unified workspace removes the hassle of manual data transfers and constant tool-switching. This streamlined process ensures faster, more reliable insights across the company.

Example: Complete Analysis Workflow in Querio

Let’s break down an analysis workflow in Querio. An analyst starts by connecting directly to their Snowflake data warehouse using secure, read-only credentials. First, they write a SQL query in one notebook cell to pull Q1 2026 customer data segmented by acquisition channel. In the next cell, they switch to Python to build a churn prediction model - seamlessly, in the same workspace.

Thanks to Querio's reactive cells, any upstream data changes automatically refresh the analysis [12][13]. The analyst defines "Active User" once in the semantic Context Layer, ensuring that this definition stays consistent across all dashboards and AI-generated queries. This eliminates version drift when teams collaborate. Finally, the analysis is saved as a versioned .py file in Git and shared with the marketing team as a live dashboard - all within Querio [12].
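
The two-cell pattern described above can be sketched outside any specific product. The example below uses sqlite3 in place of Snowflake, a hypothetical `customers` table, and a simple churn rate per channel as a stand-in for the churn prediction model, which would need a modeling library and real data.

```python
import sqlite3
from collections import defaultdict

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE customers "
    "(id INTEGER, channel TEXT, signup_date TEXT, churned INTEGER)"
)
conn.executemany(
    "INSERT INTO customers VALUES (?, ?, ?, ?)",
    [
        (1, "ads", "2026-01-10", 1),
        (2, "ads", "2026-02-14", 0),
        (3, "organic", "2026-03-02", 0),
        (4, "organic", "2025-11-20", 1),  # outside Q1 2026, filtered out below
    ],
)

# "SQL cell": pull Q1 2026 customers with their acquisition channel.
q1_rows = conn.execute(
    """
    SELECT channel, churned
    FROM customers
    WHERE signup_date BETWEEN '2026-01-01' AND '2026-03-31'
    """
).fetchall()

# "Python cell": continue on the same result without exporting anything.
counts = defaultdict(lambda: [0, 0])  # channel -> [churned, total]
for channel, churned in q1_rows:
    counts[channel][0] += churned
    counts[channel][1] += 1
churn_rate = {ch: lost / total for ch, (lost, total) in counts.items()}
```

The query result flows straight into the Python step, with no CSV export or second tool in between.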

This approach simplifies the entire process, from data querying to collaboration, making analytics faster, more accurate, and easier to manage.

Conclusion

What Fast-Growing Startups Should Know

Fast-growing startups don’t have time to juggle disconnected analytics tools. Moving to integrated SQL-Python notebooks isn’t just about upgrading technology - it’s about gaining a strategic edge. With these tools, analysts can query live warehouse data using SQL and immediately pivot to building predictive models in Python, all within the same workspace. This streamlined workflow can cut project completion times nearly in half compared to siloed methods. It’s not just about saving time; it’s about setting up a scalable analytics system that benefits the entire team.

The numbers don’t lie - current workflows are bogged down by inefficiencies and technical debt, as recent studies highlight [4][13]. Plus, the demand for professionals skilled in both SQL and Python is expected to grow dramatically by 2034 [13]. Startups that embrace unified analytics workspaces not only improve their internal processes but also position themselves to attract top-tier talent.

This is where Querio comes in. By combining live warehouse connections, AI-driven query generation, and a centralized Context Layer, Querio eliminates inefficiencies. Business users can run analytics with plain English queries, while analysts work seamlessly in reactive notebooks that integrate SQL and Python. The result? Faster insights, better collaboration, and analytics that scale without creating technical debt.

For startups building their analytics capabilities, the message is simple: choose tools that unify your workflow. Avoid the pitfalls of context switching and manual data transfers. In today’s fast-moving world, teams that integrate SQL and Python are the ones that stay ahead.

FAQs

When should a startup switch to a SQL+Python notebook?

When a startup's data analysis demands exceed the capabilities of basic SQL queries, it’s time to consider switching to a SQL + Python notebook. This setup is perfect for combining data visualization, advanced analysis, and storytelling all within a single workspace.

Such a tool becomes especially useful when working with larger datasets, incorporating machine learning, or requiring quicker insights. The integrated environment streamlines workflows, making it easier to adapt and scale - something that’s crucial in the fast-moving world of startups.

How does a governed metrics layer prevent metric drift?

A governed metrics layer acts as a centralized semantic layer that standardizes how metrics are defined and calculated across an organization. Because every dashboard, notebook, and AI-generated query reads the same definition, a change is made once and applies everywhere, which prevents metric drift. This eliminates discrepancies that often arise from manual processes or varying interpretations of metric definitions.

Is it safe to query live warehouse data from a notebook?

Querying live warehouse data from a notebook can be done safely as long as robust governance, security protocols, and access controls are in place. Today's tools are designed to offer secure, real-time data access directly within notebooks, allowing analysts to work quickly and effectively while maintaining data integrity.