10 Best AI for Python Coding in 2026: A Guide

Find the best AI for Python coding. Our 2026 review covers 10 top tools for data teams, from code completion to self-serve analytics, with pros and cons.

Outrank AI

Monday starts with a familiar pattern. A product manager wants a churn cut by segment. Finance needs a revenue check before the board draft goes out. An analyst has a notebook that broke after a schema change. A data engineer is supposed to be hardening pipelines, but keeps getting pulled into ad hoc fixes. Teams do not usually buy an AI coding tool because Python is hard. They buy one because too much useful work is trapped behind the people who know the stack best.

That is the right frame for evaluating the best AI for Python coding. The question is not only which assistant writes the fastest function in VS Code or PyCharm. The primary question is where each tool sits in your workflow: individual code generation, repo-wide change management, cloud-specific development, privacy-sensitive environments, or a broader self-serve analytics model that reduces the volume of requests reaching the data team in the first place.

Python still sits at the center of that stack. It is the language teams already use for notebooks, Pandas cleanup, orchestration scripts, ETL jobs, APIs, and ML experiments. So the practical trade-off is not whether to add AI. It is whether your team needs better code completion, better context across a large codebase, stronger governance, or a product that complements a modern analytics layer instead of competing with it.

That distinction matters for data leaders. GitHub Copilot, Cursor, Claude Code, and similar tools can speed up engineering work inside the development environment. They do not solve the operating problem of every stakeholder routing basic data questions through analysts and analytics engineers. Tools like Querio address a different bottleneck by shifting question answering closer to the business, which changes how Python work gets prioritized across the team.

If you are comparing options across that broader developer stack, 12 Best AI Tools for Developers in 2026: Your Essential Stack is a useful reference point.

The rest of this guide looks at each tool through that team-level lens: who it helps, where it creates friction, and how it fits alongside a self-serve analytics setup rather than inside a feature checklist alone.

1. Querio

A familiar data-team scene: a product manager needs a retention cut, finance wants a revenue view by segment, and an analyst ends up writing Python for a question that will be asked again next week. In that situation, the best AI for Python coding is not always the tool that completes functions fastest inside an editor. Sometimes the better choice is the one that moves routine analysis out of the ticket queue and into a governed workflow the business can use.

Querio fits that second category. It runs AI-assisted SQL and Python on top of the warehouse, with reactive notebooks that keep logic tied to live data instead of scattered across stale notebook exports and one-off scripts. For teams building a self-serve analytics stack, that makes Querio less of a coding copilot and more of an operating layer between the warehouse, the data team, and business users.

Why Querio belongs in a Python workflow list

Python work in analytics teams often starts long before anyone opens VS Code or PyCharm. The primary bottleneck is usually request handling, context switching, and repeated analysis that never gets turned into reusable assets. Querio addresses that part of the workflow by giving teams an interface where AI produces explicit SQL and Python that users can inspect, edit, and keep.

That transparency matters.

A lot of AI analytics products generate answers without showing enough of the underlying logic to review properly. Querio keeps the SQL and Python visible, which makes it easier to debug, standardize, and hand off across analysts, analytics engineers, and data leaders. Its notebooks also recompute when dependencies change, and the logic can be versioned as .py files in Git. That is a better fit for teams that care about code review and reproducibility than the usual copy-paste notebook culture.
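To make the "recompute when dependencies change" idea concrete, here is a minimal sketch of reactive cells in plain Python. It is not Querio's implementation: the `ReactiveNotebook` class, its method names, and the cell names are all hypothetical, and the sketch assumes each cell declares which cells it reads and that upstream cells are defined first.

```python
from graphlib import TopologicalSorter


class ReactiveNotebook:
    """Toy reactive notebook: cells are functions with declared dependencies."""

    def __init__(self):
        self._funcs = {}   # cell name -> function computing the cell
        self._deps = {}    # cell name -> tuple of upstream cell names
        self.values = {}   # cell name -> last computed value

    def cell(self, name, func, deps=()):
        """Define or redefine a cell, then recompute it and everything downstream."""
        self._funcs[name] = func
        self._deps[name] = tuple(deps)
        self._recompute(name)

    def _downstream(self, changed):
        """Return the changed cell plus every cell that depends on it."""
        stale = {changed}
        grew = True
        while grew:
            grew = False
            for name, deps in self._deps.items():
                if name not in stale and stale.intersection(deps):
                    stale.add(name)
                    grew = True
        return stale

    def _recompute(self, changed):
        stale = self._downstream(changed)
        # static_order() yields each cell after the cells it depends on.
        for name in TopologicalSorter(self._deps).static_order():
            if name in stale:
                args = [self.values[d] for d in self._deps[name]]
                self.values[name] = self._funcs[name](*args)


nb = ReactiveNotebook()
nb.cell("orders", lambda: [120, 80, 200])
nb.cell("revenue", lambda orders: sum(orders), deps=["orders"])
nb.cell("average", lambda revenue, orders: revenue / len(orders),
        deps=["revenue", "orders"])

# Redefining the upstream cell refreshes both dependents.
nb.cell("orders", lambda: [120, 80, 200, 400])
```

After the last call, `nb.values["revenue"]` and `nb.values["average"]` reflect the new order list without either cell being rerun by hand. That is the behavior that separates a reactive notebook from a stale export.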

I would treat Querio as a force multiplier for analytics engineering, not a replacement for IDE-based coding assistants. It helps when the team’s best Python users are stuck answering recurring business questions instead of building reusable models, metrics, and reporting logic. If that pain sounds familiar, Querio is solving a different problem than Copilot, Cursor, or Claude Code. The overlap is real, but the job to be done is different.

For teams assessing that shift, Querio’s own perspective on AI data analysis tools that write code for analysts is useful context.

Where Querio changes the operating model

Querio is strongest in warehouse-centered environments where the main goal is controlled self-serve analytics, not just faster code generation. It connects to common warehouses and databases, including BigQuery, Snowflake, Redshift, ClickHouse, Postgres, MySQL/MariaDB, SQL Server, and MotherDuck. It also supports embedding through iFrame, API, and MCP, which matters for teams that want to surface analytics inside internal tools or customer-facing products.

The practical advantage is that it gives different groups different levels of access to the same system. Business users can ask for answers. Analysts can inspect and refine the generated work. Engineers can version, review, and productionize logic instead of rebuilding the same request from scratch. In a modern analytics stack, that is often more valuable than shaving a few seconds off function completion inside an IDE.

Its Boards feature is also a sensible answer to a common self-serve failure mode. Exploration expands quickly, but very little becomes approved output. Boards let teams publish verified, embeddable reporting while keeping the underlying SQL and Python available for technical users who need to audit or extend it.

The trade-offs are straightforward:

Best fit for mature warehouse workflows: Querio works best when important data already lives in systems your team trusts and governs.

Requires setup and ownership: Permissions, warehouse connections, semantic context, and notebook organization need active management.

Less relevant for pure application engineering: If your Python work is mostly backend services, APIs, or local scripts, editor-first tools will matter more day to day.

Stronger as part of a stack than as a single standard: Data teams often get the most value by pairing Querio with an IDE assistant rather than trying to make one product cover every workflow.

That is the key strategic point. For solo developers, Querio may feel heavier than necessary. For product and data leaders trying to reduce analyst dependency while keeping code visible and reviewable, it can change how work moves through the team. If you are comparing team-wide AI investments, that makes Querio less a direct substitute for coding assistants and more a complement to them. For a broader market view, 12 Best AI Tools for Developers in 2026: Your Essential Stack is a useful companion read.

2. GitHub Copilot

For most Python teams, Copilot is still the default starting point. That isn’t hype. JetBrains’ 2024 State of Developer Ecosystem survey put GitHub Copilot at a 62% adoption rate among developers, as summarized in Augment Code’s analysis of AI coding tools for data science and ML. When a tool reaches that level of adoption, it becomes the baseline against which everything else gets judged.

The practical reason is simple. Copilot is everywhere your team already works: VS Code, JetBrains IDEs, GitHub repos, pull requests, and issue flows. For Python, it’s especially good at the repetitive but expensive work that fills real days, such as generating data wrangling boilerplate, cleaning up function signatures, drafting tests, or filling in standard framework patterns.
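As an illustration of that kind of boilerplate, here is the sort of small cleanup helper and matching test an assistant typically drafts from a docstring. It is a hand-written sketch rather than actual Copilot output. The function name `normalize_rows` and the column names are made up for the example, and it uses only the standard library.

```python
import csv
import io


def normalize_rows(csv_text):
    """Parse CSV text, trim whitespace, lowercase emails, drop rows without an email."""
    rows = []
    for raw in csv.DictReader(io.StringIO(csv_text)):
        email = (raw.get("email") or "").strip().lower()
        if not email:
            continue  # skip rows that cannot be matched to a user
        plan = (raw.get("plan") or "").strip() or "unknown"
        mrr_text = (raw.get("mrr") or "").strip()
        rows.append({
            "email": email,
            "plan": plan,
            "mrr": float(mrr_text) if mrr_text else 0.0,
        })
    return rows


def test_normalize_rows():
    sample = "email,plan,mrr\n Ana@Example.com ,pro,49\n,free,0\nbo@example.com,,\n"
    assert normalize_rows(sample) == [
        {"email": "ana@example.com", "plan": "pro", "mrr": 49.0},
        {"email": "bo@example.com", "plan": "unknown", "mrr": 0.0},
    ]


test_normalize_rows()
```

The value of an assistant here is less the code itself than the speed of producing it. The review burden stays with the developer, which is why the paired test matters.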

Where Copilot is strongest

Copilot works best for broad Python coverage, not narrow specialization. If your developers jump between application code, ETL jobs, utility scripts, notebooks, and deployment config, Copilot usually keeps up better than tools that shine only in one mode. It’s also one of the easiest products to roll out if your company already runs on GitHub.

That said, there’s a real limitation data teams feel quickly. Notebook workflows are messy. Context often lives across cells, comments, outputs, warehouse schemas, and external services. Copilot is strong inside a single coding surface, but that doesn’t always translate cleanly to exploratory analytics work.

Copilot is a very good assistant for Python developers. It is not a complete answer to notebook-heavy analytics operations.

That’s the decision point. If you want the safest team default, Copilot is hard to argue against. If your Python work is mostly Jupyter-heavy and tied to self-serve analytics, it may need to sit beside a system designed for warehouse-native analysis. If you're comparing that exact trade-off, this breakdown of AI Python copilots is worth reading.

A few practical pros and cons stand out:

Strong default choice: Broad IDE support and tight GitHub integration make rollout straightforward.

Good for mixed Python workloads: It handles everyday library usage and application code well.

Less comfortable in sprawling notebook context: Multi-cell reasoning is where teams start looking at alternatives.

Governance still matters: Model access, usage controls, and team policy need attention once adoption spreads.

3. Amazon Q Developer

A common team scenario looks like this. Python code is spread across Lambda handlers, Boto3 scripts, Step Functions glue, CI jobs, and internal tools that exist mainly to keep AWS infrastructure running. In that setup, Amazon Q Developer is usually better judged as an AWS assistant than as a pure Python copilot.

That distinction matters for data teams. If your analysts and analytics engineers are writing Python that reaches into S3, Athena, Redshift, Glue, IAM-governed services, or internal platform APIs, Q can reduce the amount of AWS-specific syntax and service knowledge people need to hold in their heads. It is often less impressive in generic Python work than in cloud-heavy operational code. That is the trade-off.
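For a sense of the AWS-specific detail involved, here is a minimal sketch of a Lambda handler that reads the bucket and object key out of an S3 event notification. The handler name, the returned dictionary shape, and the sample bucket and key are assumptions for illustration. The event layout follows the S3 notification format, in which object keys arrive URL-encoded. The sketch deliberately avoids Boto3 calls so it can run locally.

```python
from urllib.parse import unquote_plus


def handler(event, context=None):
    """Collect bucket/key pairs from an S3 event notification."""
    objects = []
    for record in event.get("Records", []):
        s3 = record.get("s3")
        if not s3:
            continue  # ignore records from other event sources
        objects.append({
            "bucket": s3["bucket"]["name"],
            # S3 URL-encodes keys in notifications, so spaces arrive as "+".
            "key": unquote_plus(s3["object"]["key"]),
        })
    return {"count": len(objects), "objects": objects}


# Local check with a hand-built event; the bucket and key are made up.
sample_event = {
    "Records": [
        {"s3": {"bucket": {"name": "example-raw-data"},
                "object": {"key": "exports/2026/churn+by+segment.csv"}}}
    ]
}
result = handler(sample_event)
```

The key decoding step is the kind of service-specific detail that is easy to miss in generic Python help and that a cloud-aware assistant is more likely to surface.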

Best fit for teams where Python is tightly coupled to AWS

Amazon Q Developer makes the most sense when the hard part of the job is not Python itself. The hard part is working safely inside AWS. Permissions, service boundaries, deployment conventions, and infrastructure sprawl shape whether the tool saves time.

That is why product and data leaders should evaluate Q differently from a solo developer picking a coding assistant for local scripts. In an AWS-first company, Q can fit naturally into platform standards and security reviews. In a mixed environment, it can feel narrow.

For teams running a self-serve analytics stack, the key question is not whether Q writes Python. Several tools on this list can do that. The central question is where it fits beside a system like Querio. Querio helps business teams work directly with warehouse data and analytics workflows. Amazon Q helps more when the work shifts into service integration, automation, deployment logic, and AWS-specific engineering tasks. Those tools can complement each other, but they solve different bottlenecks.

A few practical pros and cons stand out:

Strong fit for AWS-native engineering work: It is more useful when Python code regularly touches Lambda, Boto3, IAM-scoped services, and internal cloud tooling.

Better for platform-aware teams than notebook-first analysts: If the team spends more time in Jupyter than in AWS service code, the value drops quickly.

Governance enters the discussion early: Security review, admin controls, and usage boundaries matter more here than with a lightweight solo-developer tool.

Adoption depends on workflow maturity: Teams with established AWS conventions usually get more value than teams still standardizing how they build and deploy Python services.

There is also a workflow shift worth paying attention to. Analysts are writing more production-adjacent Python than they used to. Once that work starts touching orchestration, cloud storage, service credentials, or lightweight apps, the line between data work and platform work gets thinner. If that is happening in your team, this piece on AI agents for analysts explains why the tooling decision starts affecting more than just engineers.

Amazon Q Developer is not the universal answer to the question of the best AI for Python coding. For AWS-heavy organizations, though, it can be the most practical answer because it matches the runtime environment of the code.

4. Google Gemini Code Assist

Gemini Code Assist makes the most sense when your Python stack already leans heavily on Google Cloud. If your engineers live in GCP, your data stack leans on BigQuery, and your ML workflows touch TensorFlow or Colab Enterprise, Gemini lands differently than it does for a general-purpose software team.

That ecosystem fit shows up in the way people describe it. Gemini Code Assist is often highlighted for its deeper ties to BigQuery and TensorFlow in cloud-based pipelines. That doesn’t automatically make it the best tool overall, but it does make it easier to justify for teams already standardized on Google infrastructure.

Good when Python lives inside Google Cloud

For Python data teams, Gemini’s appeal is less about editor novelty and more about reducing friction between code generation and cloud execution. A team working across notebooks, data pipelines, APIs, and warehouse workflows inside Google Cloud usually cares about governance just as much as convenience. Gemini’s enterprise posture supports that conversation.

This is also one of the clearer choices for organizations that don’t want to bolt together separate products for code help, cloud workflows, and access control. When those concerns are already centralized in Google, Gemini is easier to operationalize.

Consider it this way:

Good for GCP-centered development: Especially where Python intersects with BigQuery, cloud services, and managed ML workflows.

Security-conscious teams will like the posture: Governance matters more here than in indie developer tools.

Less compelling for mixed-cloud teams: If your stack is spread across providers, the ecosystem advantage weakens.

Enterprise buying motion comes with it: This usually isn't the scrappy team’s first experiment.

For self-serve analytics teams, Gemini is useful if your "Python coding" problem is tightly connected to a Google-native data platform. If your bigger bottleneck is business users waiting on analysts, you'll still need to solve the workflow layer above the IDE.

5. JetBrains AI Assistant

If your team writes Python in PyCharm all day, switching editors just to get better AI is usually a bad trade. JetBrains AI Assistant exists for teams that want help inside the tooling they already trust, with code explanations, refactors, test generation, and newer agent-like workflows integrated into the JetBrains environment.

This option tends to appeal to experienced Python developers more than hype-driven adopters. They already like the inspections, debugger, and project structure awareness in JetBrains tools. They want AI to improve that workflow, not replace it.

The PyCharm-first option

JetBrains AI Assistant is especially practical for teams that don’t want to standardize on one external model vendor. Its provider flexibility and enterprise options give engineering leaders more room to decide how they want usage governed. That matters in larger companies where model policy changes faster than IDE policy.

The downside is that credits and quota models can get annoying for heavy users. If one group uses AI for small code explanations and another uses it aggressively for generation and test drafting, the experience can diverge quickly unless someone manages the rollout.

A lot of tooling decisions fail because leaders compare raw capability and ignore change management. JetBrains AI Assistant wins whenever "stay in PyCharm" matters more than chasing the newest AI editor.

That’s why I’d place JetBrains AI Assistant high for established engineering teams and lower for greenfield startup teams. If your Python culture already runs through JetBrains, it’s one of the easier upgrades to absorb. If your team is editor-agnostic and hungry for agentic multi-file changes, others may feel faster.

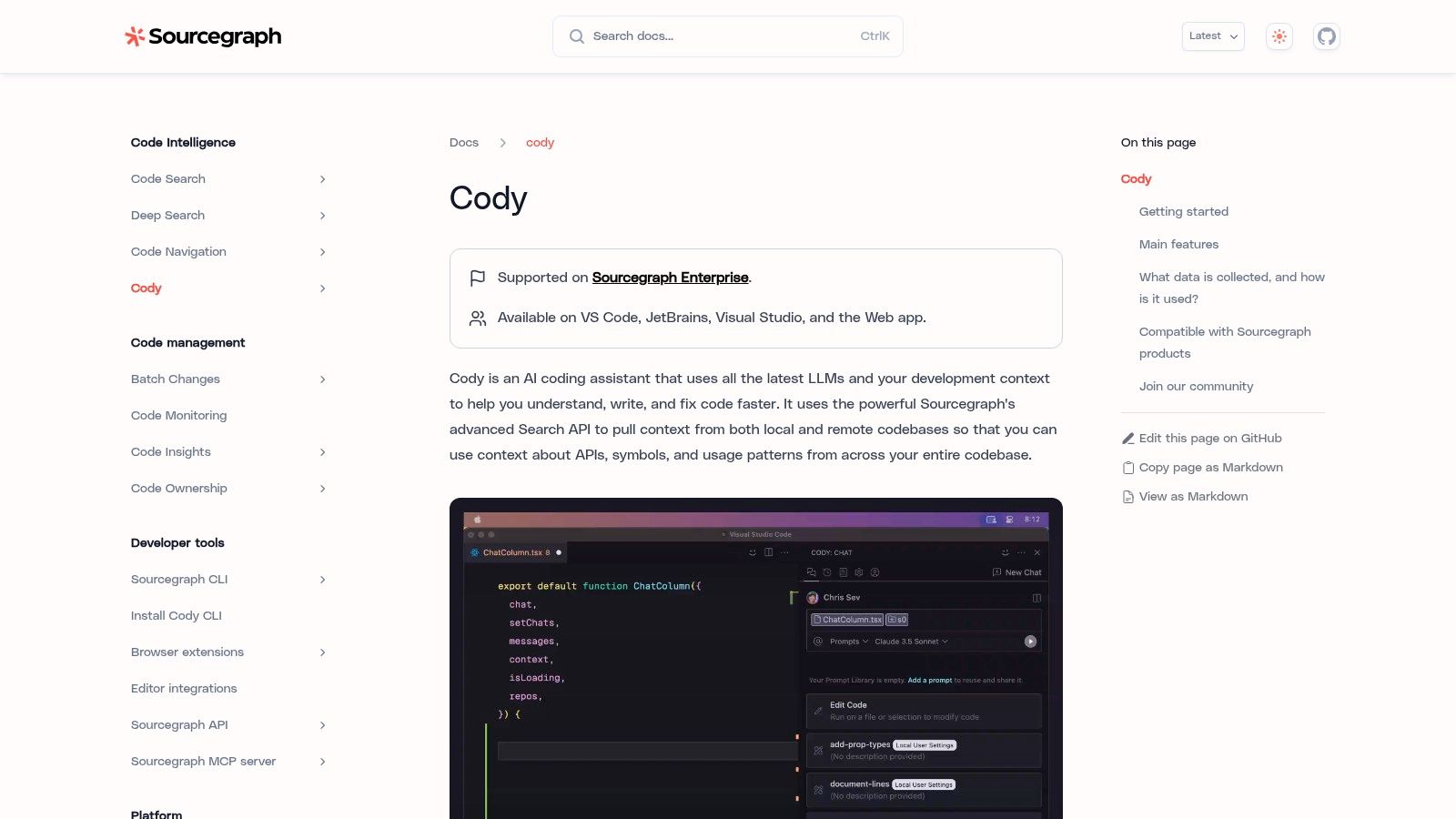

6. Sourcegraph Cody Enterprise

Sourcegraph Cody is not the casual pick. It’s the enterprise pick for organizations with large codebases, complicated ownership boundaries, and a real need for repository-level grounding. If you’ve got multiple Python services, shared libraries, internal platforms, and a lot of code search pain, Cody solves a different problem than lightweight inline assistants.

That’s why teams evaluating the best AI for Python coding at scale should keep it on the list even if it feels heavier than editor-first products. Some organizations don’t need another autocomplete tool. They need a better way to understand what exists across the company.

Built for large codebases, not casual use

Cody is strongest when monorepo sprawl and org-wide code navigation are already bottlenecks. In those environments, deep context and code search infrastructure matter more than sleek consumer packaging. The ability to bring your own model provider also matters because procurement, privacy, and cost controls rarely line up with a one-vendor strategy.

This isn’t a self-serve product in the same sense as many others on this list. The buying motion is enterprise-oriented. That’s a disadvantage for small teams and a feature for larger ones.

A few practical takeaways:

Best for codebase comprehension: Strong choice when Python exists across many repos or services.

Helps senior engineers and platform teams most: The value grows with codebase complexity.

Not ideal for individuals testing AI casually: The enterprise focus is real.

Model flexibility is strategic: BYO-LLM options can reduce lock-in and fit internal policy better.

If your Python team keeps asking "where is this logic defined?" Cody is relevant. If they’re just trying to draft utility functions faster, it’s probably overkill.

7. Tabnine

Tabnine earns its place for one reason many rankings underplay: deployment control. A lot of teams asking about the best AI for Python coding are not choosing in a vacuum. They’re choosing under privacy reviews, internal security policies, and uncomfortable questions about what can leave the network.

Security and privacy remain an underserved angle in AI Python tooling, and CodingCops’ coverage of top AI Python tools points to Tabnine as a local or on-prem option that privacy-conscious teams often bring up. That’s the right lens.

The privacy-first choice

Tabnine is a strong fit when legal, compliance, or customer commitments narrow your deployment options. It supports SaaS, VPC, on-prem, and air-gapped styles of deployment, which changes the buying conversation entirely. Suddenly the question isn’t just "which model writes nicer code?" but "which tool can we approve?"

That said, privacy posture doesn’t automatically mean best coding experience. Teams should be honest about that trade-off. In many environments, Tabnine wins because it’s viable, not because every engineer prefers it over consumer-favorite tools.

A practical framing helps:

Best for strict environments: Strong option when code handling rules cannot be compromised.

Good organizational controls: Governance and deployment flexibility are the main draw.

May involve more setup and vendor coordination: Especially compared with self-serve tools.

Worth comparing against broader Python workflows: Security is one dimension, not the whole decision.

If you’re weighing privacy-first coding assistants against more mainstream options, this guide to AI Python tools provides a useful adjacent view. For regulated teams, Tabnine often survives the review process where flashier tools don’t.

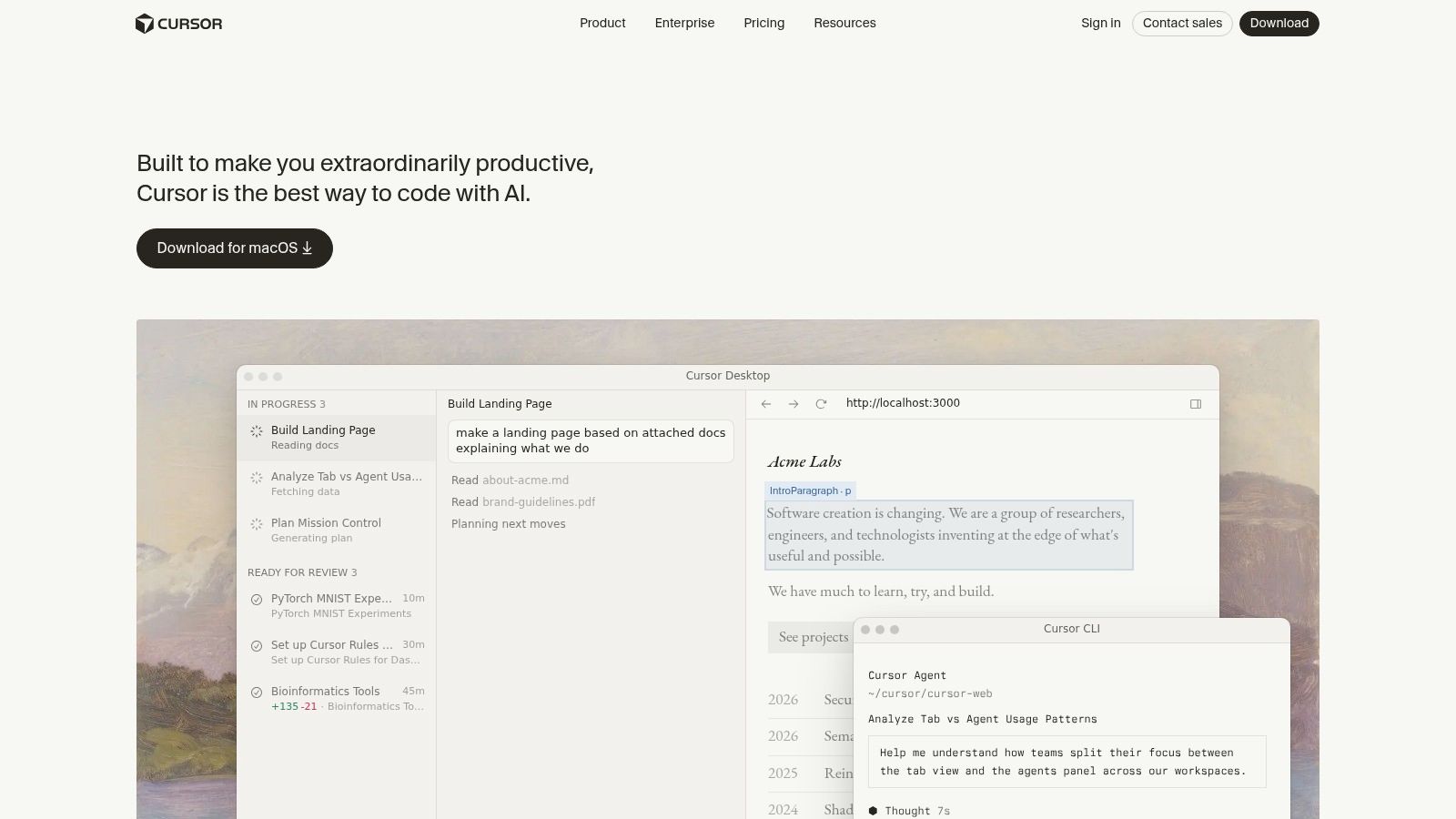

8. Cursor

Cursor has become the serious alternative for developers who want an AI-first editor rather than an add-on. UVIK’s AI coding assistant statistics roundup describes Cursor as capturing 18 to 25% market share by revenue and reaching $2B ARR by February 2026. More important than the revenue signal is what it says about product fit. A lot of power users want stronger multi-file reasoning than mainstream assistants usually provide.

For Python, that matters in real workflows: FastAPI backends, Django apps, refactors that span config and services, test updates across folders, and notebook-adjacent codebases that don’t live cleanly in one file.

Strong for multi-file Python work

Cursor shines when your task is larger than "finish this line" but smaller than "rethink our entire platform." It’s good at the kind of editing experienced engineers often push off because it’s tedious rather than difficult: renaming patterns, moving abstractions, wiring changes through, and updating neighboring files without a giant manual search session.
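To show what that manual search session involves, here is a small sketch that finds every definition, import, and use of a function name across several Python files using the standard `ast` module. It is an illustration of the tedious step, not how Cursor works internally. The `find_references` helper and the `billing` file names are hypothetical, and the sketch ignores attribute access such as `core.calc_total`.

```python
import ast


def find_references(sources, name):
    """Return sorted (filename, line) pairs where `name` is defined, imported, or used."""
    hits = []
    for filename, code in sources.items():
        for node in ast.walk(ast.parse(code)):
            if isinstance(node, ast.Name) and node.id == name:
                hits.append((filename, node.lineno))  # plain use or call
            elif (isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef))
                  and node.name == name):
                hits.append((filename, node.lineno))  # definition
            elif (isinstance(node, ast.ImportFrom)
                  and any(alias.name == name for alias in node.names)):
                hits.append((filename, node.lineno))  # from-import
    return sorted(hits)


# In-memory stand-ins for files on disk, so the sketch runs anywhere.
sources = {
    "billing/core.py": "def calc_total(items):\n    return sum(items)\n",
    "billing/api.py": (
        "from billing.core import calc_total\n"
        "\n"
        "def invoice(items):\n"
        "    return calc_total(items)\n"
    ),
}
references = find_references(sources, "calc_total")
```

Every location in `references` is a place a rename has to touch. An editor that tracks these across files, and applies the change in one pass, removes exactly this bookkeeping.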

Its biggest adoption hurdle isn’t capability. It’s switching behavior. Teams have to accept a custom editor, new billing controls, and a different relationship with their development environment. Some engineers will love that. Others will resent it.

If your Python work regularly spans multiple files, Cursor often feels more ambitious than traditional copilots. Whether that ambition helps or annoys depends on how much editor change your team will tolerate.

That’s also why Cursor is worth discussing alongside analytics tooling. Data leaders often frame SQL versus Python as a language question, but in practice it’s a workflow question. This SQL vs Python discussion is relevant because Cursor tends to be most valuable when Python work grows beyond isolated scripts into system-level changes.

I’d recommend Cursor to strong individual contributors and engineering-heavy startups first. For broad enterprise standardization, rollout friction matters more.

9. Windsurf

Windsurf is for teams that want AI to feel native to the editor, not bolted on afterward. Its Cascade workflows and multi-file editing make it attractive for fast-moving engineers who want to prototype, refactor, and iterate without constantly context-switching between chat, files, and terminal output.

Windsurf is also frequently noted for agentic debugging in notebook and terminal-adjacent scenarios. That’s important because some Python work isn’t just writing code. It’s handling the messy loop of run, fail, inspect, patch, rerun.

Fast iteration with an AI-native editor

Windsurf’s strength is speed of interaction. Greenfield Python work, exploratory internal tools, and broad refactors are where it tends to feel helpful. Teams that already like AI-native editors often find it easier to stay in flow here than with more conservative assistants.

But the same design can create friction in established organizations. A dedicated AI IDE is a larger adoption ask than a plugin. Security review, support expectations, and team consistency all become harder once the tool is also the editor.

Here’s the honest trade-off set:

Fast for prototyping: Especially useful when code is changing quickly.

Good for cross-file edits: Helpful for broad implementation passes.

Harder to standardize in conservative orgs: New editor adoption is never free.

Best for teams comfortable experimenting: Less ideal where process and predictability dominate.

Windsurf is often a good fit for startups, skunkworks projects, and developers who want to push AI workflows harder. It’s less obviously the right answer for a data organization trying to institutionalize reliable analytics processes.

10. Anthropic Claude Code

Claude Code appeals to a specific kind of engineer: one who is comfortable in terminal workflows, wants strong reasoning over code changes, and doesn’t need a heavy visual wrapper to trust the tool. For Python, that can be a very effective combination because so much real work still happens in shells, test runners, local scripts, and repo-level edits.

It also fits teams that already standardize on Claude models through enterprise providers. That removes some procurement and policy friction that would otherwise slow adoption.

Best for engineers who like terminal workflows

Claude Code is strongest when the engineer driving it is already disciplined. Clear prompts, explicit constraints, and review habits make a big difference. In return, it’s often very effective at understanding modules, proposing edits, and stepping through code changes with interactive diffs and checkpoints.

That doesn’t make it the universal best AI for Python coding. Teams that need broad standardization across mixed skill levels may prefer tools that feel more familiar inside mainstream IDEs. Claude Code is often better as a power tool than as a lowest-common-denominator team standard.

A few practical notes:

Great for terminal-heavy developers: Especially those comfortable reviewing diffs and guiding agents.

Good reasoning on Python modules: Strong fit for code understanding and rewriting tasks.

Needs disciplined use: Loose prompting produces loose results.

Pricing and access vary by deployment path: Teams should decide whether they want direct subscriptions or enterprise provider integration.

For teams going deep on the Claude ecosystem, this developer guide to the Anthropic Claude API is a useful complement. Claude Code isn’t the safest default for every organization, but strong engineers can get a lot out of it.

Top 10 AI Tools for Python Coding, Feature Comparison

| Product | Core features | Target audience | UX & integrations | Unique strengths / Pricing & deployment |
|---|---|---|---|---|
| Querio (Recommended) | AI coding agents on your warehouse; reactive Python notebooks; versioned .py context; embeddable Boards | Product teams, analysts, non‑technical stakeholders, mid‑market companies | AI chat sidebar; recompute like a spreadsheet; connects BigQuery/Snowflake/Redshift/ClickHouse/Postgres/MySQL/SQL Server/MotherDuck; iFrame/API embeds | Self‑serve analytics replacing Hex/Looker; governance + audited code; claimed 20x faster cycles; free trial/demo (pricing via demo) |
| GitHub Copilot | Inline completions; Copilot Chat; PR and repo reasoning | Python dev teams using GitHub | Deep GitHub + IDE integrations (VS Code, JetBrains, Neovim, Visual Studio) | Best for GitHub workflows; enterprise controls; subscription with premium request quotas |
| Amazon Q Developer | IDE/CLI assistant; modernization agents; agentic requests | Teams building/deploying on AWS | Integrates with AWS tooling and CLI; admin controls | AWS‑centric tooling and governance; Pro pricing and IP indemnity; watch per‑line overages |
| Google Gemini Code Assist | Inline completions, code gen, chat; enterprise governance | GCP‑centric dev and data teams | Integrates with Firebase, Apigee, Cloud Workstations, Colab Enterprise | Enterprise security (VPC, IAM); best value on Google Cloud; enterprise licensing |
| JetBrains AI Assistant | In‑IDE chat; code explanations; test generation; refactors | Developers using JetBrains IDEs (PyCharm, IntelliJ) | Native JetBrains integration; BYO API key and multiple model options | Flexible provider routing; quota/credit model; IDE‑native experience |
| Sourcegraph Cody (Enterprise) | Repo‑level grounding; enterprise code search; deep context | Large orgs with monorepos and complex codebases | IDE extensions + web app; BYO LLM support (Azure, Bedrock, etc.) | Excellent for org‑wide code understanding; enterprise sales only |
| Tabnine | Inline & multi‑line completions; in‑IDE chat; multiple deployment modes | Teams needing strict privacy/compliance | Supports major IDEs; works with many LLM providers; VPC/on‑prem/air‑gapped | Strong privacy/compliance and deployment flexibility; enterprise pricing |
| Cursor (AI code editor) | AI‑first editor; multi‑file edits; agentic workflows | Python developers wanting VS Code‑like AI editor | VS Code–compatible editor; team billing and admin controls | Built‑in AI editor experience; smooth multi‑file workflows; adoption effort vs existing editors |
| Windsurf (formerly Codeium) | Agent‑driven multi‑file edits (Cascade); premium models | Individuals and teams prototyping / refactors | Dedicated AI IDE; free + Pro/Teams/Enterprise tiers | Generous free tier; fast cross‑file refactors; enterprise features via sales |
| Anthropic Claude Code | VS Code extension; interactive diffs & checkpoints; CLI | Teams standardized on Claude models | Desktop/web/CLI options; integrates via Claude subscriptions or enterprise providers | Strong Python reasoning and rewrite flows; multiple deployment/integration paths; subscription/API pricing |

The Future is Collaborative AI as Your Team Multiplier

Monday morning, the data lead is triaging dashboard requests, a staff engineer is cleaning up Python glue code between services, and an analyst is stuck waiting on someone to explain business logic buried in an old notebook. Those are three different bottlenecks. No single AI coding tool fixes all of them.

That is the defining frame for choosing the best AI for Python coding in 2026. Model quality matters, but team design matters more. A solo developer usually cares about speed inside the editor. A platform team cares about governance, codebase context, and rollout risk. A data leader cares about whether AI reduces ticket volume or just helps people write the same backlog faster.

For individual throughput, the trade-offs are fairly clear. Copilot remains the easiest default for many teams because adoption friction is low. Cursor and Windsurf are stronger when engineers want editor-native workflows built around multi-file changes, but switching editors is still a real organizational cost. JetBrains AI Assistant fits teams that already live in PyCharm. Claude Code suits engineers who work heavily in the terminal and want tighter control over rewrites and review steps. Tabnine enters the conversation early when legal, privacy, or deployment constraints rule out more consumer-style setups.

At the team and platform level, the buying criteria change. Amazon Q Developer, Gemini Code Assist, and Sourcegraph Cody tend to make more sense when the challenge is standardization across larger environments. They are often easier to align with cloud controls, repository context, procurement requirements, and internal governance. Engineers may not rank them highest for day-one excitement. Leaders often rank them higher for rollout feasibility.

Data teams should be even more selective.

A Python assistant can help write transformation code, tests, and notebook logic. It usually does not fix the operating problem underneath, which is that business users still depend on analysts for routine answers. If product managers, finance, and customer teams are waiting in a queue for basic reporting, faster code generation inside VS Code or PyCharm improves one layer of the workflow, not the whole system.

That is why Querio belongs in the same evaluation, even though it is solving a different part of the stack. In practice, it complements coding assistants more often than it competes with them. Engineers can keep using Copilot, Cursor, or Claude Code for code authoring. The analytics layer then handles a separate problem: making warehouse-native SQL and Python workflows accessible, governed, and reusable across the business.

The strongest setups I have seen use both. Teams use coding assistants to reduce repetitive development work, then pair that with a self-serve analytics workflow that removes avoidable analyst requests. That combination improves more than developer speed. It improves how the company gets answers.

So the final decision should map to the constraint you have. If your issue is personal coding velocity, pick the assistant that fits your editor, privacy requirements, and review process. If your issue is enterprise control, favor the tools your platform team can support without workarounds. If your issue is that analysts are acting as a reporting help desk, add an analytics product that changes the workflow instead of asking AI to patch around it.

If your data team is buried in ad hoc requests and analysts are spending their week answering the same questions in slightly different forms, Querio is worth a serious look. It brings AI coding agents and reactive Python notebooks directly to the warehouse, so teams can shift from one-off reporting to governed self-serve analytics without hiding the underlying SQL and Python.