
Replace your analyst with AI: what actually breaks and what gets better

AI speeds up and standardizes analytics, but it often misses context, produces outputs that are hard to trust, and stumbles on edge cases. The best outcomes come from pairing AI with human oversight.

When companies replace human analysts with AI, the results are mixed. AI tools can automate repetitive tasks, analyze large datasets quickly, and reduce costs. However, they often lack the context, judgment, and domain knowledge that human analysts bring to the table. Here's the breakdown:

What AI improves:

Speed and scale: Processes complex queries in seconds, handles multiple tasks simultaneously.

Consistency: Ensures uniform definitions for metrics, reducing discrepancies.

Predictive insights: Provides forecasts and alerts for emerging trends.

What AI struggles with:

Context and nuance: Misinterprets business-specific terms or metrics.

Trust issues: Outputs can appear correct but contain subtle errors.

Edge cases: Fails to handle rare or unpredictable scenarios effectively.

The best approach combines AI's efficiency with human oversight. Analysts evolve into roles like "AI supervisors", focusing on strategy and validation while AI handles routine tasks. Tools like Querio balance speed and control by generating transparent, editable code and applying consistent business logic through a semantic layer. This hybrid model maximizes productivity without sacrificing accuracy.


What Breaks Without Human Analysts

AI can auto-generate SQL queries in no time and handle massive datasets, but there are areas where it simply can't match the expertise of human analysts. These gaps can lead to expensive errors, a lack of confidence in AI-generated results, and decisions that, while technically accurate, miss the mark when it comes to actual business needs. Without human oversight, critical misinterpretations and inefficiencies become all too common.

Loss of Context and Domain Knowledge

AI might understand general terms like "churn", but it struggles with the nuances that are unique to your business. One symptom is semantic instability: AI models often reinterpret key business terms with every query, leading to inconsistent calculations. For example, the value reported for "active user" or "quarterly revenue" can change from one query to the next, depending on which date boundaries and joins the AI happens to choose.

Take this example: A retail company saw its "quarterly growth" metric swing by as much as 40% because the AI misinterpreted date boundaries. Similarly, a SaaS company reported a 23% fluctuation in customer counts based solely on whether the AI chose a LEFT or INNER join for the query [5].
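To make the join problem concrete, here is a minimal sketch using Python's built-in sqlite3 module. The tables, names, and rows are invented for illustration and are not taken from the cases cited above; the point is only that the same question returns a different customer count depending on the join type.

```python
import sqlite3

# Hypothetical toy schema: three customers, one of whom has no subscription row.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE subscriptions (customer_id INTEGER, plan TEXT);
    INSERT INTO customers VALUES (1, 'Acme'), (2, 'Globex'), (3, 'Initech');
    INSERT INTO subscriptions VALUES (1, 'pro'), (2, 'basic');
""")

def count_customers(join_type: str) -> int:
    """Count distinct customers using the given join type (LEFT or INNER)."""
    if join_type not in ("LEFT", "INNER"):
        raise ValueError("join_type must be 'LEFT' or 'INNER'")
    query = f"""
        SELECT COUNT(DISTINCT c.id)
        FROM customers AS c
        {join_type} JOIN subscriptions AS s ON s.customer_id = c.id
    """
    return conn.execute(query).fetchone()[0]

left_count = count_customers("LEFT")    # keeps the customer with no subscription
inner_count = count_customers("INNER")  # silently drops that customer
print(left_count, inner_count)          # prints: 3 2
```

Neither number is wrong in isolation. Which one counts as "customers" is a business decision, and that is exactly the kind of decision that should be written down once rather than re-made on every query.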

The root issue? AI operates largely without memory. Each query starts fresh, without the institutional knowledge that human analysts bring to the table [9].

Black-Box Outputs and Trust Issues

AI outputs often look convincing, but subtle errors can lurk beneath the surface - errors that are easy to miss but can mislead decision-makers. This creates a troubling accountability gap. When something goes wrong, there's no clear person to answer for the mistake.

A real-world example: In late 2025, Salesforce faced this issue when customer Vivint reported that AI agents failed to perform simple tasks like sending customer satisfaction surveys. The problem? The agents silently stopped working after receiving just eight instructions. Salesforce CTO Muralidhar Krishnaprasad confirmed the issue and responded by implementing stricter safeguards and increasing human oversight [6].

The complexity of workflows only makes trust harder to build. Even if each step in a 20-step analytical process is 95% reliable, the chance that every step succeeds, assuming the steps fail independently, is 0.95^20, or just 36% [8]. It's no wonder that about 80% of companies using generative AI reported no material benefits - most simply didn't trust the outputs enough to act on them [8].
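The 36% figure is simple compounding, and it can be checked in a few lines. This sketch assumes each step succeeds or fails independently of the others, which is a simplification; the function name is ours.

```python
def chain_success_rate(step_reliability: float, steps: int) -> float:
    """Probability that every step succeeds, assuming steps fail independently."""
    return step_reliability ** steps

rate = chain_success_rate(0.95, 20)
print(f"{rate:.1%}")  # prints: 35.8%
```

The same arithmetic shows why shortening a workflow, or adding a human check partway through, pays off quickly: at the same 95% per-step reliability, a 5-step chain succeeds about 77% of the time.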

Failure to Handle Edge Cases

When it comes to rare or unpredictable situations, human judgment becomes indispensable. AI struggles with cases that fall outside its training data. For instance, in industrial predictive maintenance, AI flagged false alerts caused by vibrations from nearby machinery. Without human intervention, teams wasted time chasing non-issues, ultimately losing faith in the system [7].

This problem extends to business analytics too. AI can't perform a "smell test" or apply common sense to spot results that don't make sense. Imagine your cost-per-acquisition suddenly dropping by 80% overnight. A human analyst would immediately suspect a tracking error, but AI might interpret it as a great result.
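A crude version of that smell test can be automated so that suspicious swings get routed to a person instead of being reported as good news. The sketch below is an illustration only: the function name and the 50% threshold are assumptions, and a real threshold would depend on how volatile each metric normally is.

```python
def smell_test(previous: float, current: float, max_change: float = 0.5) -> str:
    """Return "review" when a metric moves by more than max_change (a fraction)."""
    if previous == 0:
        return "review"  # no baseline to compare against, so let a human look
    change = abs(current - previous) / abs(previous)
    return "review" if change > max_change else "ok"

print(smell_test(previous=50.0, current=10.0))  # CPA down 80%: prints "review"
print(smell_test(previous=50.0, current=47.0))  # CPA down 6%: prints "ok"
```

The check does not decide whether the drop is real. It only makes sure someone with context looks before the number reaches a dashboard.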

AI also misses external factors - like a competitor's bold market move, an internal decision to close a target account early, or the subtle tone in a CEO's cautiously optimistic guidance. In February 2025, Michael Schopf, CFA, tested six AI models on SWOT analyses for companies like Deutsche Telekom and Daiichi Sankyo. While the AI identified some risks, it completely missed the nuances in management's language - details that seasoned analysts picked up through direct conversations. Schopf summed it up perfectly:

"Nothing replaces talking to management to understand how they really think about their business" [10].

AI also struggles with rare events or new machinery, where historical data is sparse. Bearings, for example, account for 40% of all equipment failures in industrial settings [7]. AI trained on standard conditions often fails to predict breakdowns during unusual stress. Here, human analysts are essential to interpret and contextualize the data, ensuring nothing critical gets overlooked.

What Gets Better with AI

AI brings undeniable advantages to the table, particularly in speed, scale, and consistency. These aren't just minor tweaks - they represent a whole new way of working with data, making it faster and easier for teams to access and use information effectively.

Speed and Scale

AI tools like Querio can answer complex questions in minutes instead of days. You simply pose your query in plain English, and the system generates the necessary query, runs it on live warehouse data, and delivers the answer - all while you’re still brainstorming your next move.

This rapid turnaround doesn’t have to compromise accuracy. Manual analysis is vulnerable to human error and fatigue, especially under tight deadlines, whereas AI automates tasks like data preparation and error-checking. This reduces mistakes caused by rushing or multitasking, saving organizations from the hidden costs of poor data quality and rework.

On top of speed, AI’s scalability is unmatched. A single analyst can only juggle so many tasks before hitting a limit. AI, however, can handle multiple requests across an entire organization without breaking stride. It pulls real-time data directly from live warehouse connections, ensuring that teams are always working with the most current information - not outdated exports from last week.

This operational efficiency naturally supports a unified framework, ensuring everyone in the organization is on the same page.

Consistency Through Shared Definitions

AI doesn’t just work faster - it also ensures uniformity and trust through governed data definitions.

In traditional analytics, "shadow metrics" are a common issue. Different teams often calculate the same metric in different ways. For example, Marketing’s definition of "active user" might not align with Product’s, and Finance could have yet another version. This leads to conflicting reports, endless meetings to reconcile numbers, and decisions based on whichever version seems most convincing.

AI platforms solve this problem by implementing a governed semantic layer. The data team sets business logic, joins, and metric definitions once, and these are applied consistently across all queries, dashboards, and analyses. So, when someone asks about "monthly recurring revenue", the AI applies the same calculation every time - no room for interpretation, no unexpected variations.
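As a rough illustration of the "define once, apply everywhere" idea, here is a minimal sketch of a metric registry. It is not Querio's implementation: the `Metric` class, the `METRICS` dictionary, the column names, and the SQL expression are all hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Metric:
    name: str
    sql: str    # the single governed expression for this metric
    owner: str  # team accountable for the definition

# Hypothetical governed definitions, maintained by the data team.
METRICS = {
    "monthly_recurring_revenue": Metric(
        name="monthly_recurring_revenue",
        sql="SUM(CASE WHEN status = 'active' THEN monthly_amount ELSE 0 END)",
        owner="finance",
    ),
}

def build_query(metric_name: str, table: str) -> str:
    """Build a query from the governed definition; refuse undefined metrics."""
    if metric_name not in METRICS:
        raise KeyError(f"'{metric_name}' is not a governed metric")
    metric = METRICS[metric_name]
    return f"SELECT {metric.sql} AS {metric.name} FROM {table}"

print(build_query("monthly_recurring_revenue", "subscriptions"))
```

Because every request goes through the same lookup, two people asking for monthly recurring revenue get the same expression, and a request for a metric nobody has defined fails loudly instead of being improvised.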

This consistency builds trust. When everyone knows the numbers come from the same source logic, debates shift from “Whose numbers are correct?” to “What should we do about this?” That’s when data becomes a truly valuable tool for decision-making.

Predictive and Forward-Looking Analysis

Traditional business intelligence often focuses on historical data. Dashboards typically show what happened last month or last quarter. By the time issues are identified, the opportunity to act has often passed.

AI changes the game by enabling predictive and prescriptive analytics. Instead of just looking at past performance, platforms like Querio provide dynamic forecasts and proactive alerts. Real-time processing highlights trends as they emerge, not weeks later. Automated alerts flag anomalies early, giving teams time to address potential problems before they escalate.
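Automated anomaly alerts can be as simple as comparing the latest value with recent history. The sketch below uses a basic z-score rule from Python's standard library. It stands in for whatever method a given platform actually uses, and the order counts and the threshold of 3 standard deviations are made up for illustration.

```python
from statistics import mean, stdev

def is_anomaly(history: list[float], latest: float, threshold: float = 3.0) -> bool:
    """True when latest is more than `threshold` standard deviations from the mean."""
    if len(history) < 2:
        return False  # not enough history to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return latest != mu  # flat history: any change is unusual
    return abs(latest - mu) / sigma > threshold

daily_orders = [102, 98, 105, 99, 101, 97, 103]  # hypothetical last seven days
print(is_anomaly(daily_orders, 100))  # prints: False
print(is_anomaly(daily_orders, 160))  # prints: True
```

A rule like this catches sudden spikes and drops, but it knows nothing about seasonality or promotions, which is one more reason alerts still benefit from human triage.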

This shift to forward-looking analysis means you can anticipate challenges and opportunities, rather than just reacting to them. And the best part? You don’t need to be a data scientist to benefit. Simply ask questions in plain English, and the AI handles the complex modeling - delivering forecasts, identifying patterns, and even suggesting next steps based on the data.

Human Analysts vs. AI: Direct Comparison

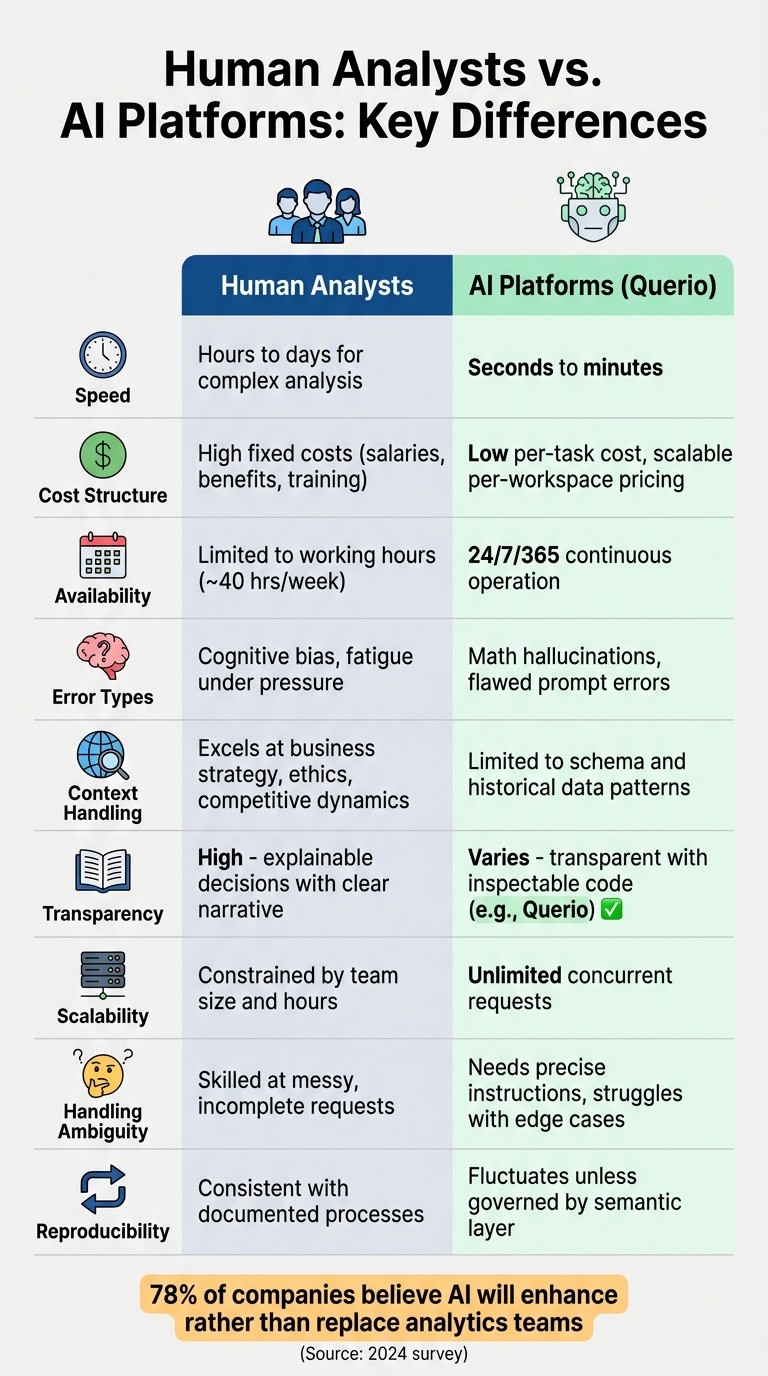

Human Analysts vs AI Platforms: Key Differences in Speed, Cost, and Capabilities

Human analysts and AI systems each bring unique strengths to the table, offering complementary benefits in the world of analytics.

As Elvira Nassirova, Lead Analytics Engineer at Coupler.io, aptly explains:

"AI isn't inherently superior - it simply accelerates the surrounding tasks." [2]

This perspective highlights the importance of pairing AI's efficiency with the nuanced judgment of human analysts. Let’s dive into a detailed comparison to see how they differ.

Key Differences: Human Analysts vs. AI Platforms

| Feature | Human Analysts | AI Platforms (Querio) |
|---|---|---|
| Speed | Takes hours to days for complex analysis | Processes queries in seconds to minutes |
| Cost Structure | High fixed costs, including salaries, benefits, and training | Low per-task cost with scalable, per-workspace pricing |
| Availability | Limited to standard working hours (e.g., 40 hours per week) | Operates continuously, 24/7/365 |
| Error Types | Prone to cognitive bias and fatigue, especially under pressure | Susceptible to "math hallucinations" and errors from flawed prompts |
| Context Handling | Excels at understanding business strategy, ethics, and competitive dynamics | Limited to schema and historical data patterns |
| Transparency | High - decisions are explainable and tied to a clear narrative | Varies - transparent when code is inspectable (e.g., Querio), less so otherwise |
| Scalability | Constrained by team size and working hours | Handles unlimited concurrent requests effortlessly |
| Handling Ambiguity | Skilled at managing incomplete or messy requests | Needs precise instructions and struggles with edge cases |
| Reproducibility | Consistent when processes are well-documented | Results can fluctuate unless governed by a robust semantic layer |

These distinctions show why a data analysis strategy that blends AI's speed with human expertise creates the most value. In fact, a 2024 survey revealed that 78% of companies believe AI will enhance rather than replace their analytics teams [3].

How Querio Combines AI Speed with Human Control

Querio blends the quickness of AI with the oversight of human judgment. It delivers rapid answers - often in seconds - while keeping analysts in control through inspectable outputs, centralized business logic, and direct connections to data warehouses, addressing the trust issues that trouble many AI tools.

Unlike opaque AI platforms, Querio generates SQL and Python code that’s fully transparent and editable. This ensures every query can be audited or adjusted as needed. For example, when a US hospital network faced unreliable patient readmission predictions from black-box AI tools, Querio's inspectable SQL allowed analysts to review JOINs on electronic health records (EHR) data. They fine-tuned queries for HIPAA compliance and accounted for domain-specific factors like seasonal flu trends. The result? Stakeholder skepticism dropped from 70% to 15%, and prediction accuracy jumped to 92% [1][8].

Querio’s semantic layer acts as a shared business glossary, integrated directly into your data warehouse. By defining terms like "customer lifetime value" or "Q1 revenue" once - formatted for US standards (e.g., $1,234.56, MM/DD/YYYY) - Querio ensures this logic is applied consistently across all queries. An e-commerce company, for instance, resolved 85% of context-related query errors by pre-defining over 200 metrics, enabling AI to scale insights across millions of SKUs [3][7].

With direct connections to Snowflake, BigQuery, and Databricks, Querio avoids the pitfalls of stale data syncing. Real-time validation ensures accuracy without moving data. A US fintech firm, for example, cut report generation time from two hours of manual SQL to just 30 seconds, maintaining 99.9% accuracy thanks to live warehouse integration. As Querio CEO Rayyan Islam puts it:

"Querio lets AI do the heavy lifting while humans retain the wheel - perfect for enterprise BI" [12].

These real-time capabilities highlight how Querio's features can be maximized when paired with thoughtful practices.

Best Practices for Using Querio

To fully leverage Querio’s strengths, certain best practices can help ensure optimal results.

Start by focusing on data quality at the warehouse level. Querio integrates seamlessly with validation tools like Great Expectations, which flags anomalies before AI queries are run. For example, a manufacturing company reduced data errors by 65% by scheduling weekly reviews of their semantic layer, ensuring reliable outputs for executive decisions [1][7].

Another key step is to align AI with clear business goals. Use Querio's semantic layer to map out KPIs and apply the 80/20 rule: let AI handle the roughly 80% of work that is routine, such as daily sales reports, while reserving the remaining 20%, complex scenarios like market entry forecasts, for human review. A SaaS company using this approach tripled their BI delivery speed while maintaining nuanced insights, such as regional pricing adjustments [4][5].

Lastly, keep humans involved in strategic decisions. For high-stakes questions like "How will tariff changes affect the supply chain?" route the AI’s draft analysis to human teams for review. Train staff to use natural language queries for routine tasks, set strict AI guardrails in the semantic layer to block invalid queries, and track adoption through Querio dashboards. This hybrid model has been shown to boost productivity by 50% while reducing AI edge-case failures by 90% [2][6].

How Querio's Features Solve AI Weaknesses

When these practices are implemented, Querio’s capabilities shine in addressing common AI challenges.

For instance, inspectable code generation directly tackles trust issues. While most AI tools achieve only 60–70% accuracy on BI tasks, Querio reaches over 95% by allowing analysts to verify and refine SQL and Python outputs. This approach speeds up query validation by 40% [1][3].

The semantic layer resolves gaps in context and domain knowledge. Instead of AI inconsistently interpreting metrics, Querio enforces pre-defined terms with US-specific conventions like currency symbols, imperial measurements, and fiscal calendars. This eliminates the “shadow metrics” problem, where conflicting dashboards arise from inconsistent calculations. By addressing these issues, Querio resolves 92% of edge cases that typically trip up AI analytics tools, which often fail 30–50% of the time [3][7][11].

Finally, live warehouse connections combat the black-box nature of AI outputs. Querio validates queries in real time against your warehouse schema, showing the exact SQL it will execute and letting you make edits before finalizing. Companies using Querio report 99% uptime compared to 85% for tools relying on ETL processes, with no trust incidents over six-month spans [2][5].
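The general pattern of checking generated SQL against the schema before running it can be sketched with SQLite, where `EXPLAIN` compiles a statement without returning its rows. This is an illustration of the idea, not a description of how Querio validates queries; the `orders` table and the `validate_query` helper are hypothetical, and a warehouse such as Snowflake or BigQuery would need its own dry-run mechanism.

```python
import sqlite3

# Hypothetical stand-in for a warehouse schema.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, amount REAL, order_date TEXT)")

def validate_query(sql: str) -> tuple[bool, str]:
    """Check generated SQL against the schema without running the query itself."""
    if not sql.lstrip().upper().startswith("SELECT"):
        return False, "only SELECT statements are allowed"
    try:
        # EXPLAIN makes SQLite compile the statement, so unknown tables or
        # columns raise an error here, before any data is read.
        conn.execute(f"EXPLAIN {sql}")
    except (sqlite3.Error, sqlite3.Warning) as exc:
        return False, str(exc)
    return True, "ok"

print(validate_query("SELECT SUM(amount) FROM orders"))   # valid against the schema
print(validate_query("SELECT SUM(revenue) FROM orders"))  # unknown column: rejected
print(validate_query("DROP TABLE orders"))                # not a SELECT: rejected
```

A check like this catches structural mistakes such as a hallucinated column name. It cannot tell whether a valid query answers the right business question, which is why the analyst still reviews the SQL.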

Conclusion: Understanding the AI Analytics Trade-offs

AI is transforming how data work gets done. It excels at tasks like data cleaning, SQL generation, and surfacing insights - things it can do with incredible speed and precision [13]. But when it comes to interpreting vague business problems, handling edge cases, or exercising judgment in unfamiliar situations, AI still has its limits.

The real danger isn't choosing between AI and humans; it's deploying AI without the right safeguards in place. AI can sometimes "hallucinate" calculations, misinterpret key business logic (like fiscal calendars), or produce results that look accurate but are fundamentally flawed [5]. There is a strategic risk as well. As Andrew Gelfand, Head of Quant at Balyasny Asset Management, noted:

"AI is becoming so efficient that managers at my level may have less to do... where does my strategy come in if we're all using the same tool?" [13]

This is why a hybrid approach makes the most sense, combining AI's speed and efficiency with the critical oversight and creativity that only humans can provide. It’s about creating a system where AI and human analysts work together, each playing to their strengths.

Querio is designed to strike this balance. With inspectable code, a semantic layer, and live connections to data warehouses, it ensures AI delivers fast and consistent results without sacrificing transparency or accuracy. Rather than replacing human analysts, Querio empowers them, acting as an AI assistant that automates repetitive tasks while leaving the strategic decisions to the experts.

In this new model, analysts step into the role of AI supervisors, validating queries and applying their domain expertise where it counts most [2]. Querio takes care of the grunt work, freeing up analysts to focus on insights that drive real business impact. The result? Faster results, fewer errors, and more time for meaningful analysis.

FAQs

Which analytics tasks can AI safely automate today?

AI excels at automating tasks such as data cleaning, data profiling, report generation, pattern detection, anomaly identification, and natural language querying. These processes take advantage of AI's ability to work with speed, maintain consistency, and handle large-scale operations efficiently. By automating these tasks, organizations can significantly cut down on manual work and improve overall productivity.

How can we prevent AI from changing metric definitions over time?

Define each metric once in a governed semantic layer, so the AI applies the same business logic, joins, and calculations to every query instead of reinterpreting terms each time, and review those definitions on a regular schedule. For predictive models, also monitor and validate outputs, retrain on updated data as conditions evolve, and use data drift and concept drift detection to spot and address shifts early. Together, these measures keep your metrics reliable and aligned over time.

When should a human analyst review AI-generated SQL and results?

A human analyst should review AI-generated SQL and results before any high-stakes decision, and whenever a query is complex or spans multiple tables, where precision and trust matter most. The reviewer's job is to confirm that the query reflects the correct business context, verify accuracy, and catch errors such as hallucinations or translation mistakes.
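One way to put that into practice is a simple routing rule that sends multi-table or high-stakes queries to a reviewer. The sketch below is a deliberately naive illustration: the function name, the `high_stakes` flag, and the one-join threshold are assumptions, and counting JOIN keywords is only a rough proxy for query complexity.

```python
import re

def needs_human_review(sql: str, high_stakes: bool = False, max_joins: int = 1) -> bool:
    """Route a query to a human when it is high-stakes or joins many tables."""
    joins = len(re.findall(r"\bJOIN\b", sql, flags=re.IGNORECASE))
    return high_stakes or joins > max_joins

simple = "SELECT COUNT(*) FROM orders"
complex_query = (
    "SELECT c.region, SUM(o.amount) FROM orders o "
    "JOIN customers c ON c.id = o.customer_id "
    "LEFT JOIN refunds r ON r.order_id = o.id GROUP BY c.region"
)
print(needs_human_review(simple))                    # prints: False
print(needs_human_review(complex_query))             # prints: True
print(needs_human_review(simple, high_stakes=True))  # prints: True
```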
