Enterprise Data Management: A Founder's Guide for 2026

A practical guide to enterprise data management. Learn to build a data architecture that ends bottlenecks and enables self-service analytics for faster growth.

published

Outrank AI

enterprise data management, data governance, self-service analytics, data architecture, business intelligence

88c61635-583e-4166-81f4-b93f651f59b0

A familiar pattern shows up once a company starts growing. The product manager wants to know why activation dipped for one cohort. Sales wants pipeline coverage by segment. Finance wants a cleaner revenue view before the board meeting. None of these are exotic requests, yet all of them land in the same queue.

That queue is usually the data team.

At first, this feels manageable. A few dashboards, a few SQL queries, a few ad hoc exports. Then the team becomes a human API. Every answer depends on a small group of people who know where the joins break, which field is reliable, and which dashboard is wrong. Growth slows down not because the company lacks data, but because it can't reliably use the data it already has.

Enterprise data management makes theory practical. It is not corporate overhead. It acts as the operating system that enables a company to move from reactive reporting to trusted, self-service analysis.

Table of Contents

The Data Bottleneck Is Slowing Your Growth

The clearest sign that a company needs enterprise data management isn't a compliance audit or a new warehouse migration. It's delay.

A growth team asks for a funnel breakdown and waits three days. A founder asks whether a pricing test changed expansion behavior and gets three conflicting answers. An analyst spends half the week reconciling CRM records with billing data instead of helping the business make decisions. Everyone is busy, but decision velocity drops.

This bottleneck gets worse as systems multiply. The CRM says one thing. Product events say another. Finance has its own logic. Support exports data into spreadsheets. Before long, no one is debating the strategy. They're debating which number is real.

The cost of running on requests

When this happens, the data team becomes an intake desk for the whole company. That's unsustainable.

A healthy data function shouldn't spend most of its time answering repeat questions or manually stitching together definitions. It should build reliable infrastructure, set standards, and make trusted data easy to use. If you're still fighting siloed systems, this guide on breaking down data silos is a useful companion to the operational issues organizations typically encounter first.

Teams rarely complain about a lack of dashboards. They complain that they still need a specialist to answer simple questions.

The broader market reflects the same pressure. The global enterprise data management market was valued at USD 111.28 billion in 2025 and is projected to reach USD 294.99 billion by 2034, according to Fortune Business Insights on the enterprise data management market. Companies aren't spending on this because it's fashionable. They're spending because fragmented data is expensive.

Enterprise data management is a speed system

The mistake is to treat enterprise data management like a slow, enterprise-only function that matters later. In practice, it matters most when a company is trying to move faster.

If product, finance, and operations can't trust shared definitions, they create local workarounds. Those workarounds become shadow systems. Then even simple reporting turns into archaeology. Good enterprise data management prevents that drift by creating the conditions for speed: clear ownership, consistent definitions, governed access, and data pipelines people don't have to babysit.

That's what turns data from a queue into infrastructure.

What Is Enterprise Data Management Really

Enterprise teams hear "enterprise data management" and picture a giant governance program, a pile of policy documents, and a procurement cycle that never ends. That's the wrong mental model.

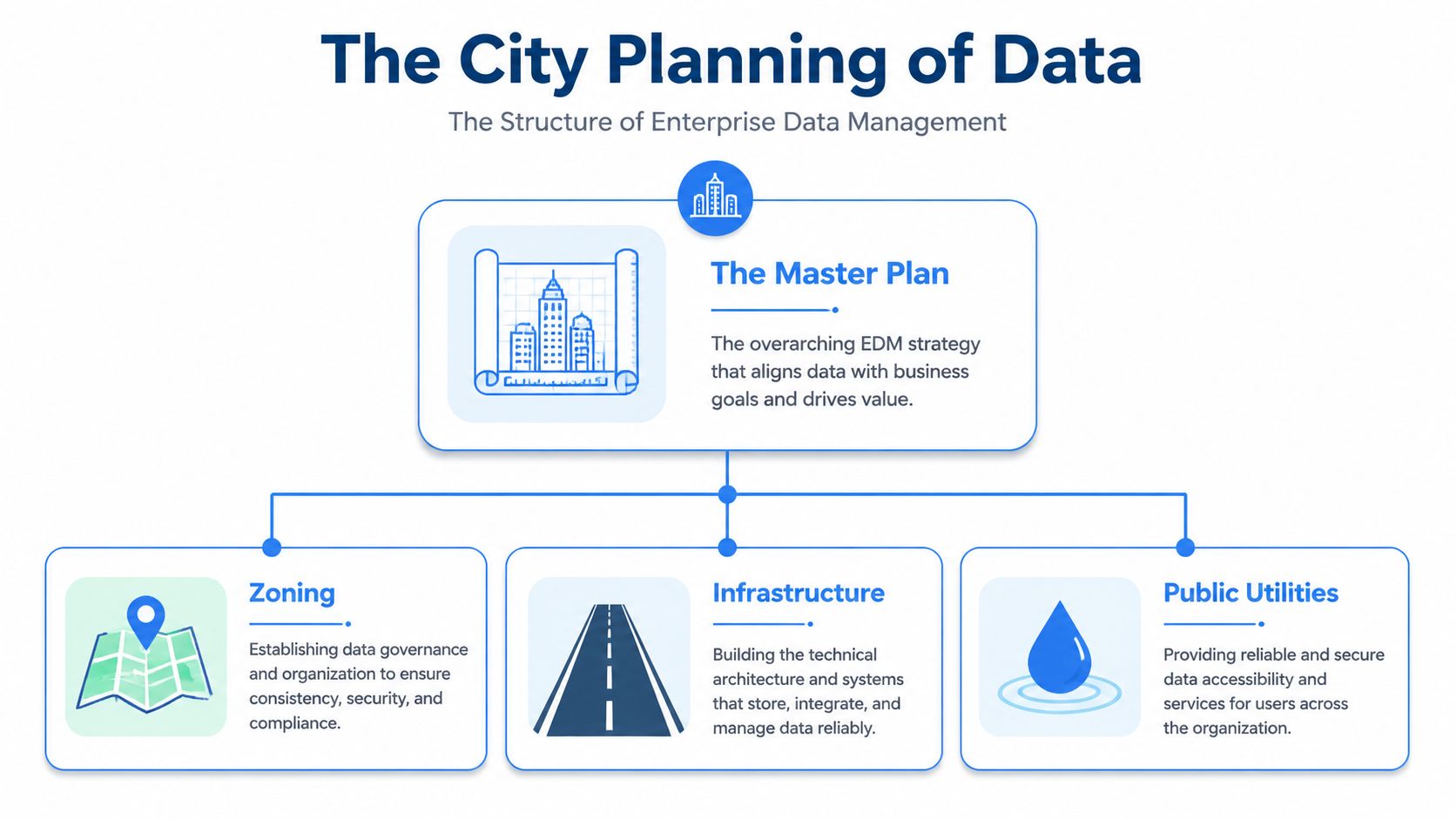

Enterprise data management is better understood as city planning for data. Without planning, a city grows into congestion and confusion. Roads don't connect. Utilities are unreliable. Neighborhoods evolve in isolation. The same thing happens in a company when data grows faster than the systems around it.

Think of it like city planning

You need a master plan, not just more buildings.

In data terms, that means defining how information enters the business, how it's standardized, who can use it, how it stays trustworthy, and how people discover the right version without asking around. A semantic layer often becomes part of that usability layer because it translates technical models into business meaning. If you want that concept in more detail, what a semantic layer does is worth understanding early.

A useful way to think about the analogy:

Zoning controls where things belong. In EDM, that's governance, ownership, naming conventions, and access rules.

Infrastructure keeps the city functioning. In EDM, that's the warehouse, pipelines, integration logic, catalogs, and lineage.

Public utilities make the city usable. In EDM, that's discoverability, documentation, permissioning, and reliable access for non-engineers.

If you skip planning, teams still build. They just build locally. Marketing keeps its own definitions. Sales exports into CSVs. Finance rebuilds core metrics offline. The result isn't flexibility. It's fragmentation.

The core pillars of enterprise data management

Enterprise data management isn't one tool. It's a set of disciplines that have to work together.

Pillar | Business Function |

|---|---|

Governance | Defines rules, ownership, access, and decision rights for data |

Data quality | Prevents bad records, broken joins, and unreliable reporting |

Integration | Connects source systems so teams aren't operating from isolated datasets |

Security | Controls who can see and use sensitive information |

Master data management | Standardizes core entities like customer, product, and account |

Metadata management | Documents definitions, lineage, and business context so data is discoverable |

Lifecycle management | Handles retention, archival, and responsible disposal of data |

Some pillars are visible every day. Data quality shows up when a dashboard is wrong. Integration shows up when a CRM field doesn't match billing. Security shows up when access is too loose or too restrictive.

Other factors are equally significant but often emerge at a later stage. Metadata management and lifecycle management typically appear elective until the team expands, audits become more frequent, or self-service adoption plateaus because nobody knows what a table means.

Practical rule: If a dataset is important enough to drive a decision, it needs an owner, a definition, and a documented path from source to use.

The strongest enterprise data management programs treat these pillars as one system. Governance without integration becomes paperwork. Integration without quality just moves broken data faster. Access without metadata creates clutter instead of self-service.

Good EDM turns raw data into something the business can trust and use.

Choosing Your Data Architecture and Governance Model

Architecture decisions get too much attention in isolation. Teams debate warehouse versus lakehouse, centralized versus federated, or whether they should jump straight to data mesh. Those are real choices, but most failures don't come from picking the wrong shape. They come from building a technical pattern without a governance model that matches how the company operates.

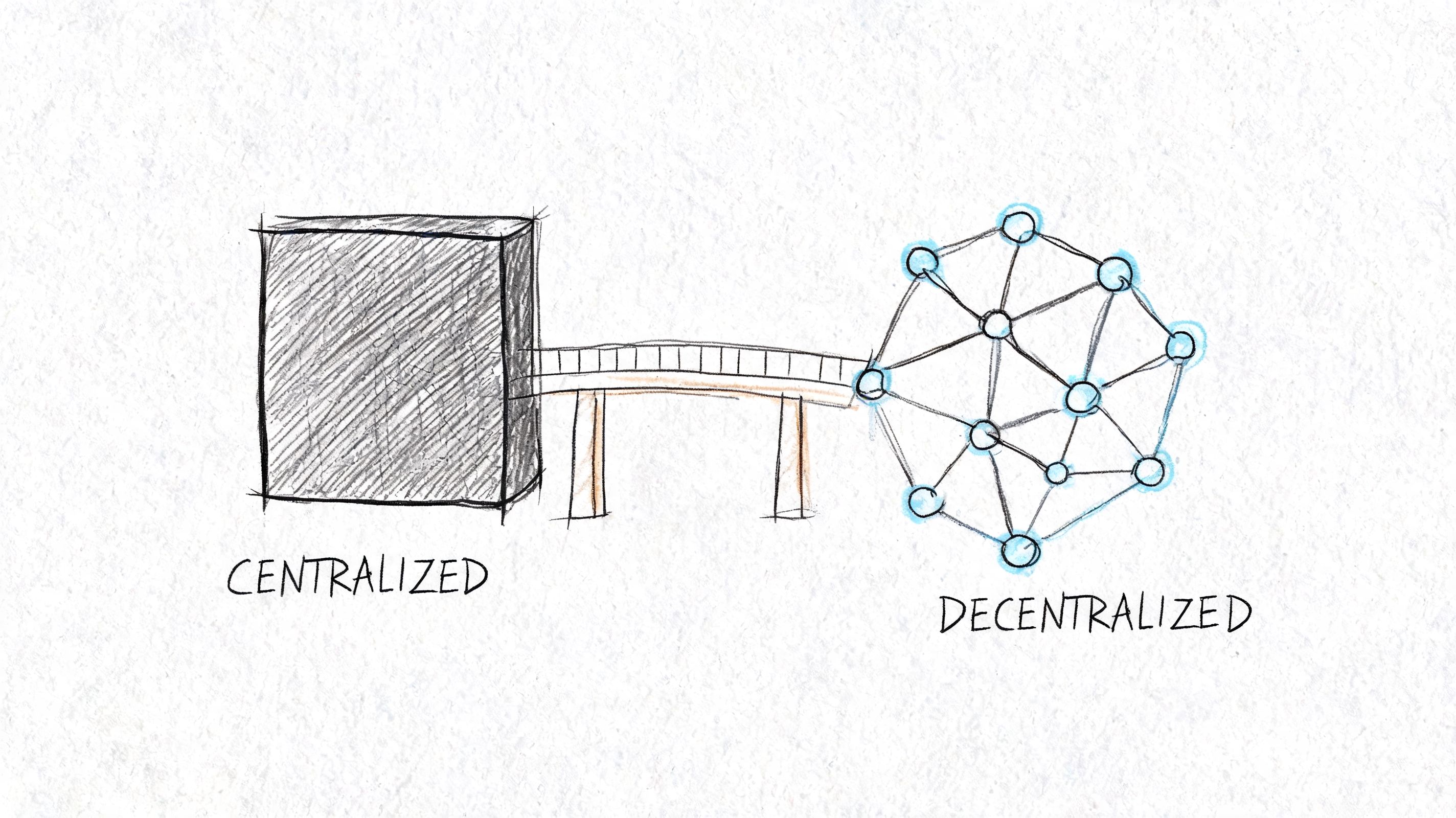

Centralized first usually wins

For most startups and mid-market companies, a centralized model is the right default. Put critical data in one governed platform. Standardize ingestion. Define shared metrics once. Give business teams access to curated, documented datasets.

That doesn't mean every transformation lives with one person forever. It means the company starts from consistency.

A decentralized approach can work when business units are mature, domain teams are capable of owning data products, and governance standards are already strong. Most companies adopt the language of decentralization long before they've earned the discipline it requires. What they call data mesh is often just distributed confusion.

A simple comparison helps:

Model | Where it works | Trade-off |

|---|---|---|

Centralized warehouse or lakehouse | Growing companies that need consistency and faster standardization | Can create backlog if the platform team becomes a gatekeeper |

Federated or domain-owned model | Larger organizations with strong domain accountability | Harder to maintain shared definitions and governance |

Hybrid model | Companies with a strong central foundation and selected domain autonomy | Requires clear interface boundaries and mature stewardship |

Governance fails when nobody owns the data

This is the part most architecture diagrams hide. You can buy a modern stack and still get bad outcomes if no one owns customer, revenue, product, or marketing data in practice.

Data governance is the most common EDM component, with 92% implementation, yet the biggest challenge remains lack of clear ownership, which creates silos and inconsistent stewardship, according to Scoop Market's enterprise data management statistics.

That rings true in real teams. Policies are rarely the blocker. Accountability is.

One team assumes engineering owns the source schema. Engineering assumes analytics owns downstream definitions. Analytics assumes finance signs off on revenue logic. Meanwhile, every dashboard tells a slightly different story. This gets even sharper in regulated industries. Teams working on optimizing bank data for competitive advantage face the same core issue: governance only works when ownership is explicit and operational.

The fastest way to weaken trust in data is to let definitions cross team boundaries without assigning a steward.

A practical ownership model

You don't need a huge governance council to fix this. You need clear roles for critical domains.

Use a model like this:

Domain owner holds business accountability. For example, a VP of Sales owns the meaning of pipeline stages.

Data steward maintains definitions, quality rules, and usage standards for that domain.

Platform team owns the shared infrastructure, ingestion patterns, access controls, and catalog tooling.

Consumers raise issues, request changes, and use governed datasets instead of rebuilding logic locally.

For many teams, this is enough to get started. The key is to keep the scope narrow at first. Pick a few high-value domains like customer, revenue, and product usage. Document approved definitions. Name the owner in writing. Build a lightweight operating rhythm around issue review and schema change management. If you're formalizing that process, this overview of data governance implementation is a practical next step.

What doesn't work is pretending ownership can stay informal once the company passes the early stage. It can't. Data either has a steward, or it decays.

How to Measure Your EDM Program's Success

Most enterprise data management programs get measured the wrong way. Teams report table counts, dashboard counts, pipeline counts, or ticket closure rates. Those metrics can be useful for operators, but they don't tell leadership whether the system is helping the business move faster or operate more efficiently.

If your EDM program creates more assets but the company still waits on analysts for basic answers, the program isn't working.

Stop reporting vanity metrics

A full data catalog sounds good. So does a long list of governed datasets. Neither matters if business users don't trust the outputs.

The best measurement framework starts with friction. How long does it take to answer a new business question? How often does a team have to manually verify a report before using it? How much analyst time goes into cleanup that should have been handled upstream?

A mature data function should reduce waiting, rework, and argument. If those aren't improving, your metrics are too shallow.

This is also where executives often underestimate the payoff. Organizations with mature data governance achieve an average 24.1% revenue uplift and 25.4% cost savings, as noted by Integrate.io on enterprise data management tools and strategy. Those numbers matter because they connect governance to operating outcomes, not just compliance.

In environments where provenance and auditability matter, adjacent patterns from secure systems can also be informative. For leaders evaluating tamper-resistant workflows or shared verification models, an enterprise blockchain development company can offer a useful reference point for how traceability gets designed into systems from the start.

Use a business scorecard instead

A better EDM scorecard looks something like this:

Time to insight measures how long it takes to answer a new question from request to trusted output.

Data trust score tracks how often teams can use a report or dataset without manual reconciliation.

Manual effort removed captures the hours no longer spent cleaning exports, repairing joins, or rebuilding logic in spreadsheets.

Decision adoption looks at whether business teams are using governed datasets in actual planning, not bypassing them.

Incident recovery quality evaluates how quickly teams can find the source of a break and restore confidence.

For product and growth teams, tie these to decisions they already care about. Release reviews, funnel diagnostics, pricing analysis, forecast updates, board metrics. If EDM doesn't make those workflows easier, it won't win durable support.

It also helps to define KPI ownership outside the data team. A strong metric framework isn't just a dashboard for the Head of Data. Finance should care about reporting consistency. Product should care about faster experimentation analysis. Operations should care about fewer manual handoffs. If you need a clean baseline for KPI design, this guide to understanding key performance indicators is a practical place to start.

Measuring enterprise data management's impact on operational drag is essential. That is the metric investors and operators care about most.

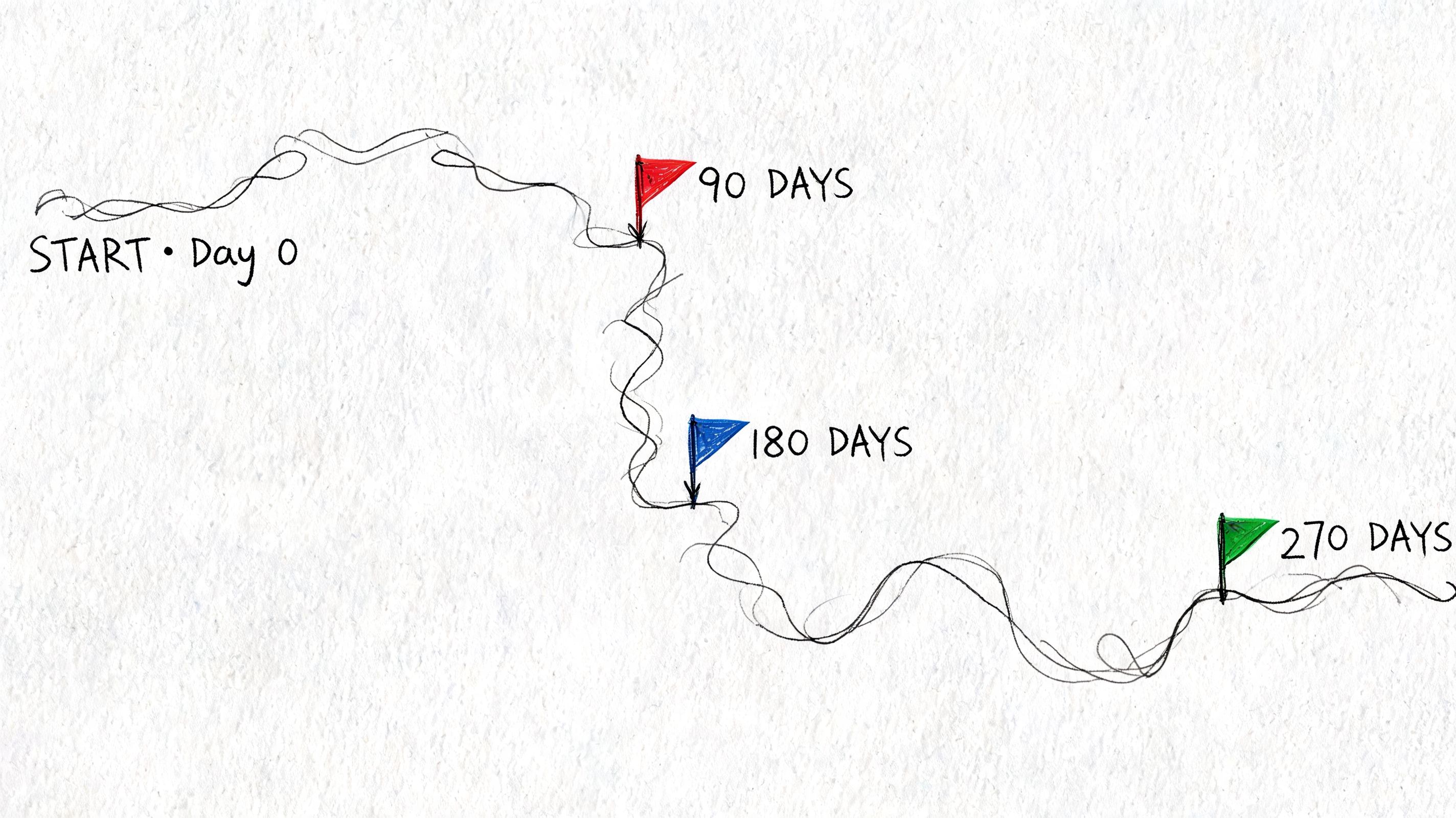

An Agile Roadmap for EDM Implementation

Large companies often treat enterprise data management like a transformation program that has to be designed in full before anything ships. That approach usually creates a lot of meetings, a long architecture document, and very little trust.

A better model is phased delivery. Build one useful, governed slice at a time. Prove reliability. Expand from there.

Days 1 to 90

Start with a domain that matters to the business and breaks often enough that improvement will be visible. Sales pipeline, revenue, and product activation are common candidates.

The first sprint should establish a narrow but real foundation:

Pick one domain

Choose a domain with direct business relevance and obvious pain. Don't start with "all customer data." Start with a specific decision surface, like qualified pipeline or signup activation.Name the owner

Assign a business owner and a steward before you model anything. If ownership is unresolved, the project will stall the first time definitions conflict.Standardize source logic

Map the systems involved, define the approved fields, and remove duplicate or unofficial logic paths.Set quality checks

Add validation at ingestion and transformation points. Catch drift early instead of after executives see the dashboard.

What to build first

After the initial domain is stable, add the infrastructure that makes self-service safe.

A practical sequence looks like this:

Catalog the dataset so people can find it and understand what it's for.

Document business definitions for every critical metric and dimension.

Apply role-based access so usage expands without exposing everything to everyone.

Instrument lineage from source to final report.

Publish curated outputs for analysts and business users, not raw source tables disguised as self-service.

Automated data lineage tracking is a critical step because it lets teams trace data from origin to final report. That visibility reduces audit times by 40% to 60% and is essential for trust in self-service analytics, according to Databricks on building an enterprise data management strategy.

When a metric breaks, lineage tells you whether the problem started in the source system, the transformation logic, or the reporting layer. Without that, every incident turns into a manual investigation.

For tooling, the specific vendors matter less than the operating pattern. Teams often use warehouse-native transformations, orchestration, a metadata catalog, alerting for schema or quality drift, and documented access workflows. The winning setup is usually the one that the team can maintain consistently.

What usually goes wrong

Most failed rollouts share the same pattern:

Scope grows too fast and the team tries to govern every domain at once.

Definitions stay unresolved because nobody wants to force a business decision.

Raw access becomes the default so users keep building their own versions anyway.

Documentation is written once and never updated when schemas change.

Keep the cadence short. Ship a domain. Learn where adoption stalls. Fix the bottlenecks. Then expand to the next domain.

That's how enterprise data management becomes an operating capability instead of a stalled initiative.

From Traditional BI to True Self-Service Analytics

Traditional BI solved an important problem. It centralized reporting and gave companies a shared view of performance. But it also created a pattern that many teams still haven't escaped: business users consume finished dashboards, then return to the data team the moment they need a new cut, a new metric, or a different level of detail.

That model doesn't scale well in fast-moving companies.

Why dashboards stop being enough

Dashboards are good at recurring questions. They are weak at emerging questions.

A founder doesn't always know in advance which slice of churn will matter next month. A product leader investigating activation needs to pivot from one behavior to another without waiting for a new ticket. A growth team wants to test a hypothesis, not wait in line for a dashboard revision.

Many organizations confuse access with self-service. Giving users a BI tool isn't enough if the data is poorly modeled, definitions are inconsistent, or every non-standard question still requires analyst intervention. That's still a queue. It's just a prettier one.

The goal isn't more dashboards. The goal is to let more people answer good questions safely on top of trusted data.

What the next model looks like

True self-service analytics sits on top of strong enterprise data management. The governed layer stays centralized. Quality, lineage, ownership, and access rules remain intact. But the consumption layer becomes far more flexible.

That shift changes the role of the data team. Instead of serving endless one-off requests, the team maintains the platform, curates trusted datasets, and enables exploration. Analysts spend less time pulling numbers and more time improving the system and tackling higher-value analysis.

This is also why older BI categories are starting to feel limiting for modern teams. Static dashboards and tightly controlled semantic models still matter, but they aren't enough for product exploration, growth diagnostics, operational analysis, or customer-facing data experiences. Teams increasingly want notebook-style workflows, direct warehouse access with guardrails, reusable logic, and interfaces that support both technical and non-technical users.

When enterprise data management is done well, self-service stops being a slogan. It becomes a practical operating model. People can explore, test, and build without breaking trust. The data team stops being a bottleneck. The business moves faster because the infrastructure is finally doing its job.

If your team is stuck acting as a human API, Querio is built for the next step. It deploys AI coding agents directly on your data warehouse and gives teams a file system approach with custom Python notebooks, so technical and non-technical users can explore trusted data without waiting on an analyst queue. For mid-market companies moving beyond traditional BI, it helps turn enterprise data management into real self-service infrastructure.