Top 10 Data Warehouse Automation Tools for 2026

Explore the top 10 data warehouse automation tools for 2026. Compare features, pros, cons, and pricing to find the right DWA solution for your data team.


Outrank AI

data warehouse automation, dwa tools, elt tools, data engineering, querio


Your data warehouse is built. You’ve migrated to Snowflake, BigQuery, or Databricks, and the hard platform decision is behind you. But the team still feels stuck. Analysts are fielding ad hoc requests all day, engineers are patching pipelines instead of building durable models, and business users still wait too long for basic answers.

That’s the point where many organizations start looking at data warehouse automation tools. The promise is appealing: less hand-coded plumbing, faster delivery, cleaner lineage, and fewer broken dependencies. The problem is that the category is crowded, and a lot of vendor messaging blurs together.

The market momentum is real. The data warehouse automation market is projected at USD 4.45 billion in 2025 and USD 19.80 billion by 2035, with a 16.15% CAGR. But buyers don’t need another market overview. They need to know which tools fit a lean analytics team, which ones fit an enterprise governance program, and which ones actually reduce the “human API” burden instead of moving it around.

This guide gets to that quickly. It groups the best options by what they do well, from end-to-end automation suites to transformation-first platforms to AI-first analytics layers. If you’re also evaluating adjacent infrastructure, this companion list of top data pipeline tools is worth keeping open.


1. Querio

Querio is the tool on this list that feels least like traditional data warehouse automation software and most like an answer to the backlog problem that shows up after the warehouse is already in place. Instead of focusing only on pipeline generation or warehouse modeling, it puts AI coding agents and reactive Python notebooks directly on top of the warehouse so teams can work in code, ask questions in natural language, and keep the resulting logic explicit and reusable.

That distinction matters. A lot of platforms automate warehouse construction but still leave business users dependent on analysts for every new slice, metric, or exploratory thread. Querio goes after that last-mile bottleneck. It’s especially relevant for mid-market teams where a small data group supports too many stakeholders and too many systems.

The category shift is timely. One market summary notes that mid-sized operations juggle 24+ tools on average, which is exactly the environment where another dashboard layer usually makes things worse instead of better.

Why Querio stands out

Querio’s core strength is that every AI-generated answer resolves into readable SQL or Python, stored as files and versioned in Git. That makes the output auditable. It also makes it easier for engineering-minded teams to trust, review, and improve what non-technical users start.

A second practical advantage is breadth of warehouse support. Querio connects directly to BigQuery, Snowflake, Redshift, ClickHouse, PostgreSQL, MySQL, MariaDB, Microsoft SQL Server, and MotherDuck. If you want context on the underlying platform layer, Querio’s guide explaining what a data warehouse is makes a solid primer for non-specialists joining the evaluation.

Practical rule: If your biggest problem is analysts acting as ticket routers, not pipeline builders, start with an AI-first analytics layer before you buy a heavier warehouse automation suite.

What works well in practice:

Self-service that stays inspectable: Users can ask questions naturally, but the system produces code rather than black-box answers.

Git-based operating model: Logic lives as files, which fits teams that already review code and care about version history.

Embedding options: Internal analytics and customer-facing data experiences are both in scope through Querio.

What doesn’t:

Notebook workflows aren’t for everyone: Teams that want only drag-and-drop BI may need time to adjust.

Detailed pricing isn’t fully public: You can start free, but enterprise packaging is still a sales conversation.

2. WhereScape

WhereScape is one of the most established names in data warehouse automation tools, and that maturity shows in both the upside and the trade-offs. It’s built for teams that want metadata-driven automation across design, development, deployment, and operations, with platform-native code generation and a lot of governance discipline baked in.

If you’re re-platforming an older warehouse or standing up a governed analytics environment fast, WhereScape still makes a strong case. The same market research that tracks overall category growth notes that tools like WhereScape automate the full lifecycle and can reduce build times from months to weeks through metadata-driven generation across 10+ cloud platforms.
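To make "metadata-driven generation" concrete, here is a minimal Python sketch of the general idea: table definitions live as metadata, and a small generator renders DDL for whichever platform you target. The `TableSpec` and `Column` structures, the `TYPE_MAP` entries, and the `render_ddl` helper are all hypothetical illustrations, not WhereScape's actual metadata model or API.

```python
from dataclasses import dataclass

# Hypothetical logical-to-physical type mappings per target platform.
TYPE_MAP = {
    "snowflake": {"string": "VARCHAR", "integer": "NUMBER(38,0)", "timestamp": "TIMESTAMP_NTZ"},
    "bigquery": {"string": "STRING", "integer": "INT64", "timestamp": "TIMESTAMP"},
}


@dataclass(frozen=True)
class Column:
    name: str
    logical_type: str
    nullable: bool = True


@dataclass(frozen=True)
class TableSpec:
    name: str
    columns: tuple[Column, ...]


def render_ddl(spec: TableSpec, platform: str) -> str:
    """Render a CREATE TABLE statement for one platform from the metadata."""
    types = TYPE_MAP[platform]
    lines = []
    for col in spec.columns:
        null_sql = "" if col.nullable else " NOT NULL"
        lines.append(f"  {col.name} {types[col.logical_type]}{null_sql}")
    body = ",\n".join(lines)
    return f"CREATE TABLE {spec.name} (\n{body}\n);"


# One metadata definition (made-up table), reused for every target platform.
customers = TableSpec(
    name="dim_customer",
    columns=(
        Column("customer_id", "integer", nullable=False),
        Column("customer_name", "string"),
        Column("loaded_at", "timestamp", nullable=False),
    ),
)

if __name__ == "__main__":
    for platform in ("snowflake", "bigquery"):
        print(f"-- {platform}")
        print(render_ddl(customers, platform))
```

The point of the pattern is that a re-platforming project changes the type map and templates, not the table definitions themselves. Real products layer lineage, load logic, and deployment on top of this basic shape.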

Where it fits best

WhereScape tends to shine when a team needs a broad operating system for warehouse delivery, not just a transformation layer. It supports Snowflake, Databricks, Microsoft Fabric, SQL Server, Oracle, BigQuery, and more, which makes it useful in mixed estates and migration projects.

Its strongest fit is usually a data team that already knows its target architecture. If you’ve already agreed on modeling standards and governance patterns, WhereScape can accelerate execution. If you’re still debating those choices, the platform can feel bigger than the immediate problem.

For teams comparing dimensional and governed warehouse patterns, Querio’s write-up on data warehouse models is a useful companion read.

WhereScape is best when speed and control matter at the same time. It’s less attractive when a small team only needs lightweight transformation workflows.

The main drawbacks are familiar. Pricing is sales-led, and the breadth can feel heavy for lean startups. But for mature teams with platform complexity, WhereScape remains one of the clearest end-to-end options.

3. TimeXtender

TimeXtender sits in a useful middle ground. It’s broad enough to cover ingestion, modeling, and transformation, but it doesn’t always feel as heavyweight as some enterprise-first suites. That makes it appealing to teams that want a “one tool” approach without assembling multiple point solutions right away.

Its metadata-driven approach is familiar if you’ve used classic warehouse automation products before. The platform also keeps one foot in cloud deployments and another in on-prem SQL Server environments, which still matters for a lot of companies with hybrid estates.

What works and what to watch

TimeXtender is a practical fit when the warehouse team wants automation plus a controlled path for gradual modernization. If some workloads stay on-prem while others move to cloud, the product’s split between cloud packages and Classic edition can be a feature rather than a limitation.

That said, the edition split can also create confusion. Feature parity isn’t always uniform, so teams need to map their target state early instead of assuming every capability travels cleanly between packaging models.

A lot of buyers in this category struggle less with feature comparison and more with cost clarity. One market analysis points to a persistent gap around pricing opacity, variable implementation costs, and ROI uncertainty for mid-market companies evaluating data warehouse automation adoption. TimeXtender isn’t alone there, but it’s part of the same buying pattern.

For teams connecting warehouse automation to downstream reporting, Querio’s article on business intelligence automation helps frame where warehouse tooling ends and analytics enablement begins.

Useful summary:

Best fit: Hybrid estates, especially SQL Server-heavy organizations.

Strength: Unified metadata-driven approach across warehouse layers.

Watchout: Sales-led pricing and edition differences require careful scoping.

If that aligns with your environment, TimeXtender is a credible all-in-one candidate.

4. Coalesce

Coalesce is less about automating every layer of the warehouse lifecycle and more about making transformation development structured, reusable, and governable. If your team’s real pain is ELT sprawl, duplicated SQL patterns, and fragile dependency chains, Coalesce is usually easier to justify than a larger end-to-end platform.

It has been especially attractive for Snowflake-first teams because the product grew up around that environment. The experience reflects that focus. Things like node templates, lineage, and environment promotion feel built for day-to-day warehouse development rather than just executive demos.

Best for transformation-heavy teams

Coalesce works well when you want visual development without abandoning engineering discipline. Auto-generated SQL, Git integration, column-level lineage, and reusable nodes all help teams standardize common patterns while still leaving room for custom logic where needed.
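The dependency side of this can be sketched in a few lines of Python. The node names below are hypothetical, and this is not how Coalesce is implemented; it only shows how declaring each node's upstream inputs lets a tool derive a safe build order and answer "what is affected downstream if this changes?" It works at node level, whereas column-level lineage applies the same idea per column.

```python
from graphlib import TopologicalSorter

# Hypothetical transformation nodes mapped to the upstream nodes they read from.
UPSTREAM = {
    "stg_orders": set(),
    "stg_customers": set(),
    "dim_customer": {"stg_customers"},
    "fct_orders": {"stg_orders", "dim_customer"},
    "rpt_revenue": {"fct_orders"},
}


def build_order(upstream):
    """Return node names ordered so every node comes after its inputs."""
    return list(TopologicalSorter(upstream).static_order())


def downstream_of(node, upstream):
    """Return every node that directly or indirectly depends on `node`."""
    impacted = set()
    frontier = [node]
    while frontier:
        current = frontier.pop()
        for candidate, inputs in upstream.items():
            if current in inputs and candidate not in impacted:
                impacted.add(candidate)
                frontier.append(candidate)
    return impacted


if __name__ == "__main__":
    print(build_order(UPSTREAM))
    print(sorted(downstream_of("stg_customers", UPSTREAM)))
```

Because the graph is declared rather than implied by run order, a change to `stg_customers` can be traced to every affected model before anything is deployed.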

That makes it a strong tool for mixed-skill teams. Analytics engineers can move quickly, junior team members can follow established patterns, and platform owners can enforce a cleaner operating model than ad hoc hand-built SQL trees.

One reason these transformation specialists have grown in importance is deployment preference. In category-level market data, cloud deployments accounted for about 67% of the market in 2025, which lines up with the environments where Coalesce tends to feel most natural.

If you’re sorting out where a tool like Coalesce belongs in a broader architecture, Querio’s breakdown of the modern data stack is a helpful reference.

Don’t buy Coalesce if you want a full ingestion-to-BI replacement. Buy it if transformation complexity is your bottleneck.

The main caveat is scope. Support beyond Snowflake has expanded, but Snowflake still feels like the center of gravity. Teams that run several warehouse engines side by side should validate the fit carefully before committing to Coalesce.

5. Matillion Data Productivity Cloud (DPC)

Matillion remains one of the more recognizable names for teams that want ingestion, transformation, and orchestration in one cloud-native package. Its pitch is straightforward: design pipelines in a low-code environment, push compute down into the warehouse, and manage the lifecycle from one place.

That simplicity is the appeal. For teams that don’t want to wire together separate ingestion, transformation, and orchestration products, Matillion can reduce coordination overhead fast.

Where Matillion makes sense

The platform fits best when broad connector coverage matters as much as transformation logic. If your warehouse team spends too much time normalizing feeds from SaaS systems, internal databases, and operational apps, Matillion’s integrated approach can save a lot of setup work.

The new AI-assistant direction with Maia is also worth watching. It reflects a broader shift in the category toward AI-supported pipeline creation and management. But buyers should separate “assistant” value from the more important question: can the platform make your operating model simpler six months after implementation?

There’s also a cost angle to evaluate carefully. Consumption pricing can be fine when workloads are predictable. It becomes harder to forecast when data volume, developer activity, and business demand all move at once.

A practical lens for Matillion:

Use it when you want one platform for connectors, ELT, and orchestration.

Avoid overcommitting when cost predictability is a top buying criterion.

Validate early when your team expects heavy AI-assisted workflow creation.

Matillion is often a strong fit for organizations that want fast platform coverage. It’s a weaker fit for teams that already have preferred tools for part of the stack and only need best-of-breed automation in one layer.

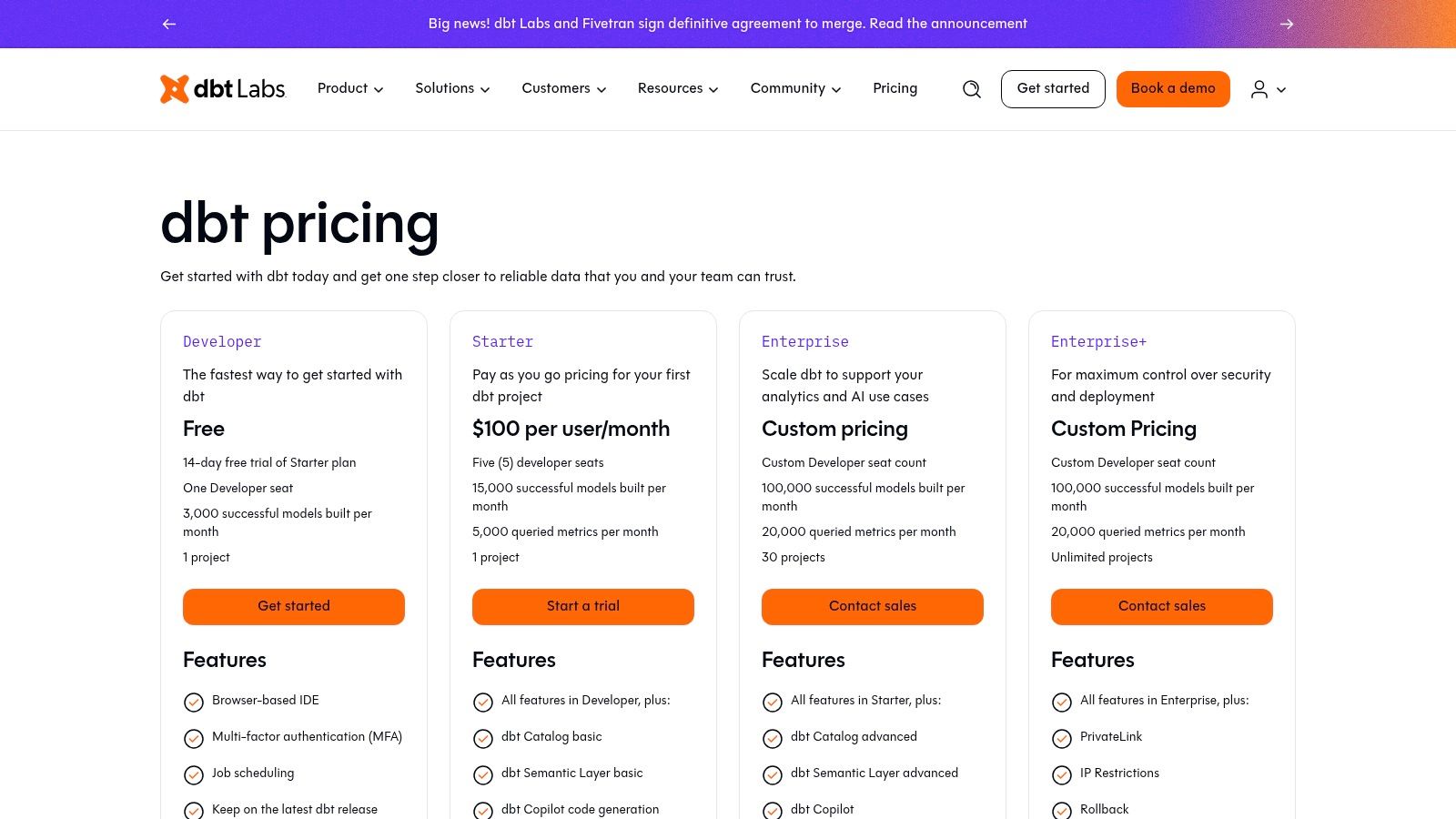

6. dbt Cloud (dbt Labs)

dbt Cloud isn’t a traditional all-in-one data warehouse automation platform, but it has become the default transformation standard for a huge share of modern data teams. If your warehouse is already in place and you want reliable SQL-based modeling, testing, documentation, and CI/CD, dbt Cloud is often the most obvious starting point.

Its biggest strength is opinionation. Teams don’t just buy a tool. They adopt a way of building analytics that is testable, versioned, and much easier to review.

Why teams standardize on dbt

dbt Cloud works because it narrows the surface area of warehouse development to something maintainable. Models are code. Tests are explicit. Documentation artifacts are generated from the work itself. For growing teams, that structure is often more valuable than flashy automation claims.
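As a rough illustration of "tests are explicit", the Python sketch below mimics the idea behind dbt's `unique` and `not_null` generic tests: each check is a query that looks for offending rows, and the check passes when it finds none. It runs against an in-memory SQLite table with made-up data rather than dbt itself, and the helper names (`not_null_failures`, `unique_failures`, `build_demo_db`) are hypothetical.

```python
import sqlite3


def not_null_failures(conn, table, column):
    """Count rows where `column` is NULL."""
    sql = f"SELECT COUNT(*) FROM {table} WHERE {column} IS NULL"
    return conn.execute(sql).fetchone()[0]


def unique_failures(conn, table, column):
    """Count distinct non-NULL values of `column` that appear more than once."""
    sql = (
        f"SELECT COUNT(*) FROM ("
        f"SELECT {column} FROM {table} WHERE {column} IS NOT NULL "
        f"GROUP BY {column} HAVING COUNT(*) > 1)"
    )
    return conn.execute(sql).fetchone()[0]


def build_demo_db():
    """Create a small made-up orders table with one duplicate and one NULL."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (order_id INTEGER, customer_id INTEGER)")
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?)",
        [(1, 10), (2, 11), (2, 12), (3, None)],
    )
    return conn


if __name__ == "__main__":
    conn = build_demo_db()
    checks = {
        "unique(order_id)": unique_failures(conn, "orders", "order_id"),
        "not_null(order_id)": not_null_failures(conn, "orders", "order_id"),
        "not_null(customer_id)": not_null_failures(conn, "orders", "customer_id"),
    }
    for name, failures in checks.items():
        status = "PASS" if failures == 0 else f"FAIL ({failures})"
        print(f"{name}: {status}")
```

Writing the assumption down as a check is what makes it reviewable: a duplicate key or an unexpected NULL fails in CI instead of surfacing weeks later as a wrong number on a dashboard.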

It’s also helped by the wider category trend toward software-led buying. In 2025, software represented about 63% share of the data warehouse automation market, which fits the rise of tools that make warehouse work feel closer to application development.

That said, dbt Cloud isn’t the right answer for every team. If the business wants no-code self-service or expects warehouse automation to handle ingestion and orchestration fully, dbt alone won’t satisfy that requirement. Some organizations also prefer dbt Core to keep cost and hosting control in-house.

dbt Cloud is strongest when your team already thinks in Git, pull requests, tests, and deployment environments.

For many companies, dbt Cloud pricing and packaging is still worth the premium because it reduces the operational burden of managing the stack yourself. But it’s best viewed as the transformation backbone, not the whole warehouse automation story.

7. VaultSpeed

VaultSpeed is specialized, and that’s exactly why some teams love it. If you’ve committed to Data Vault 2.0 for a governed, auditable, multi-source warehouse, VaultSpeed gives you automation that matches that architecture instead of forcing general-purpose tooling into a specialized job.

It’s not trying to be the easiest tool on this list. It’s trying to make a complex warehouse pattern manageable.

When Data Vault is the right call

VaultSpeed earns its place in environments where source systems change often, lineage matters, and schema drift can’t become a weekly fire drill. Its no-code or low-code approach to Data Vault 2.0 is designed to accelerate model generation and support CI/CD in modern cloud environments.

That specialization lines up with broader enterprise use cases in the category. One industry summary notes that large enterprises accounted for about 66% share in 2025, which helps explain why highly governed automation products continue to find strong demand.

Where teams go wrong is choosing Data Vault because it sounds enterprise-grade, not because they actually need it. If your use case is straightforward reporting marts with stable source data, VaultSpeed can be more architecture than value.

Short version:

Strong fit: Regulated, evolving, multi-source warehouse programs.

Weak fit: Small teams building simple dimensional marts.

Real benefit: Faster generation of governed warehouse structures with less repetitive modeling work.

If that’s your world, VaultSpeed is one of the sharper tools available.

8. Datavault Builder

Datavault Builder targets a buyer similar to VaultSpeed’s, but the feel is a bit different. It presents itself more as an end-to-end Data Vault lifecycle platform, covering modeling, ELT code generation, deployment, documentation, lineage, and operations in one system.

That can be a major advantage for teams that don’t want to stitch together governance, automation, and deployment mechanics across several products.

What it does well

The best use case is a warehouse program that has already settled on Data Vault and now wants repeatability across the full delivery lifecycle. Datavault Builder supports a wide range of warehouses, including Snowflake, Databricks, BigQuery, Fabric or Synapse, SQL Server, Oracle, and PostgreSQL.

It’s also useful for organizations that want dbt-compatible outputs without abandoning a more model-driven warehouse automation approach. That hybrid option can help teams bridge established warehouse practices and newer analytics engineering conventions.

One category snapshot notes that data integration applications represented 31% share, which is a reminder that warehouse automation buyers still care significantly about reliable movement and structuring of data, not just semantic access on top.

Choose Datavault Builder when your architecture decision is already made. Don’t use it to postpone making one.

The limits are predictable. If you don’t want Data Vault, most of the product’s value disappears. And like many enterprise-grade tools, pricing is not public. Still, for the right architecture, Datavault Builder can remove a lot of manual warehouse delivery work.

9. DataOps.live

DataOps.live is a good reminder that warehouse automation isn’t only about generating pipelines or models. Sometimes the biggest pain is delivery discipline. Environments drift, releases are brittle, testing is inconsistent, and every deployment feels riskier than it should.

That’s the problem DataOps.live tackles, especially for Snowflake-centered teams.

Best for warehouse delivery discipline

If your organization already has code, models, and transformations in place but struggles to move them safely across development, test, and production, DataOps.live can be more valuable than another modeling tool. It applies DevOps and CI/CD patterns directly to data products and warehouse workflows.

This matters more as cloud warehouse usage deepens. North America’s position in the category is tied in part to early cloud adoption, and the U.S. market alone is projected to reach USD 5.32 billion by 2035 at a 15.91% CAGR. Tools focused on operational rigor are part of that maturation curve.

A simple way to think about DataOps.live:

Buy it for: Governed deployments, branching, testing, and release automation in Snowflake-first programs.

Don’t buy it for: Broad multi-warehouse automation needs outside the Snowflake ecosystem.

Expect value when: Your data team behaves more like a product engineering team than a reporting support function.

For Snowflake-native organizations, it can bring needed process control. For everyone else, it may be too narrow.

10. Informatica Intelligent Data Management Cloud (IDMC) Cloud Data Integration

Informatica sits at the enterprise end of this market. For some teams, that means “too much platform.” For others, it means “finally, one vendor that can handle the ugly parts too.” The difference usually comes down to complexity, regulation, and scale.

Its Cloud Data Integration capabilities are broad. You’re not just getting pipeline automation. You’re getting a larger data management environment with governance, cataloging, quality, and operational tooling attached.

Where Informatica earns its keep

Informatica makes the most sense in organizations where warehouse automation is only one requirement inside a larger control framework. If security teams, governance leads, and platform owners all need a seat at the table, the breadth can be worth the overhead.

It also maps well to industry segments with heavy compliance and integration needs. In one market breakdown, BFSI represented 24% share of end-use demand, which aligns with the kinds of environments where Informatica traditionally performs well.

The weakness is just as clear. Smaller teams usually won’t use enough of the suite to justify its complexity. Licensing can also get hard to model because multiple service meters may affect the final bill.

A practical view of Informatica:

Strong fit: Regulated enterprises with broad integration and governance requirements.

Poor fit: Lean startups that mainly need fast warehouse modeling or lightweight analytics enablement.

Real trade-off: High capability, high complexity.

If your warehouse sits inside a larger enterprise data operating model, Informatica stays relevant for a reason.

Top 10 Data Warehouse Automation Tools: Feature Comparison

| Product | Core capability | Target audience | Key strengths (USP / Value) | Pricing / Cost visibility |
|---|---|---|---|---|
| Querio (recommended) | AI-driven, code-first analytics on your warehouse; reactive Python notebooks + AI agents; Git file system | Mid-market teams needing fast, self-serve analytics for technical & non-technical users | Transparent, versioned SQL/Python answers; 9 warehouse integrations; embeddable boards; big time/cost savings claims | Free starter + demo-based enterprise pricing; quotes on request |
| WhereScape | Metadata-driven automation for ELT/ETL, modeling and deployment | Enterprises doing new warehouse builds or re-platforming | Generates platform-native code; full lineage, templates for migrations; strong governance | Sales-led; no public list price |
| TimeXtender | Model-driven automation (ingest → warehouse → transforms) via Unified Metadata Framework | Hybrid or on-prem SQL Server estates and cloud projects | End-to-end toolset from ingestion to warehouse; rapid build-outs; CI/CD support | Sales-led; pricing opaque |
| Coalesce | Visual, node-based ELT and transformation (Snowflake-native) | Snowflake-first teams and mixed-skill data teams | Visual templates, dependency graph, column-level lineage, Git integration | Sales-led; demo usually required |
| Matillion DPC | Cloud-native ingestion, orchestration and pushdown ELT with agent ('Maia') | Teams needing broad connectors and cloud pushdown performance | Wide connector coverage; pushdown architecture; agent-assisted pipeline building | Consumption / credit-based tiers; free trial; forecasting can be complex |
| dbt Cloud | SQL-first, testable transformations with managed IDE, scheduler and semantic layer | Analytics-engineering teams focused on software-engineering practices | Large ecosystem; testing & docs by default; dbt Copilot/semantic features | Seat + usage pricing; can scale up costs |
| VaultSpeed | Data Vault 2.0 automation for end-to-end pipelines and models | Complex, multi-source enterprise environments adopting Data Vault | Automated Data Vault modeling, delta/schema-drift handling, auditable pipelines | Sales-led enterprise contracts; pricing not public |
| Datavault Builder | End-to-end Data Vault automation with dbt-compatible code options | Organizations standardizing on Data Vault for governance-scale warehouses | Full lifecycle coverage, CI/CD patterns, strong Snowflake support | Edition/license packs; pricing not public |
| DataOps.live | DevOps-style CI/CD and environment automation for Snowflake data products | Snowflake-first teams standardizing engineering rigor in data delivery | Branching, testing, governed deployments; Snowflake Native Apps support | Credits-based starter; larger scale pricing sales-led |
| Informatica IDMC (Cloud Data Integration) | Enterprise AI-assisted data management: ingestion, ELT, cataloging, data quality | Large regulated enterprises needing end-to-end governance at scale | Extensive connectors; integrated data quality, catalog and governance features | Consumption (IPU/compute)-based licensing; complex packaging |

Automate the Warehouse, Not Your Thinking

Data warehouse automation tools are valuable because they remove repetitive work, standardize delivery, and make it easier to scale a data platform without scaling manual effort at the same rate. But the tool itself isn’t the strategy. That’s the mistake teams make when they buy for feature breadth instead of operational need.

A useful starting point is to identify the bottleneck. If the pain lives in warehouse build-out, metadata-driven platforms like WhereScape or TimeXtender can help. If the pain lives in transformation sprawl, Coalesce or dbt Cloud may be the better fit. If the issue is governed enterprise modeling, VaultSpeed or Datavault Builder can make more sense than general-purpose tools. And if the warehouse is already built but the business still can’t get answers fast enough, an AI-first layer like Querio may solve more than another ETL purchase.

The market is still moving quickly. One category overview notes that software continues to dominate the space while cloud deployments lead adoption, and the fastest growth is increasingly coming from smaller and mid-sized companies trying to scale analytics without bloating the team. That tracks with what many data leaders are seeing firsthand. The buyer today isn’t always a central enterprise architecture office. It’s often a lean data lead trying to build self-service without sacrificing control.

There’s also a real shift happening around AI-native access patterns. Traditional data warehouse automation focused on code generation, metadata, and lifecycle management. That still matters. But newer approaches are pushing closer to the business user, especially where AI agents can work directly on the warehouse and generate explicit analysis artifacts instead of static dashboards. That doesn’t replace core warehouse engineering. It changes where self-service becomes practical.

The most important trade-off is simple. Automation helps when your processes are already pointed in the right direction. It hurts when it lets you industrialize unclear ownership, bad models, or sloppy metric definitions. The best teams use automation to enforce standards, not to avoid making decisions.

If you’re narrowing options, run a proof of concept around one painful workflow. Rebuilding one source-to-model pipeline, standing up one governed domain, or removing one recurring analyst queue will tell you more than any feature matrix. If you want a broader lens on where AI-powered operations are heading, this roundup of Cyndra’s AI tools insights is a useful companion read.

Choose the tool that best removes your current bottleneck, not the one with the longest enterprise slide deck. That’s usually the fastest path to a warehouse that people can use.

If your warehouse is already in place but your team is still acting like a reporting help desk, Querio is worth a close look. It gives business users AI-assisted, code-first access to the warehouse while keeping outputs transparent, versioned, and reusable, so your data team can stop being the human API and start building scalable self-service infrastructure.