OLTP vs OLAP: The Definitive Guide for Modern Data Teams

Struggling with OLTP vs OLAP? This guide clarifies the core differences, architectures, and use cases to help you build the right data strategy for growth.

Outrank AI

oltp vs olap, data architecture, business intelligence, database systems, htap

When you boil it down, the OLTP vs. OLAP distinction is about two different jobs. OLTP systems run the business—think processing thousands of tiny, real-time transactions like a credit card swipe or an inventory update. On the other hand, OLAP systems are built to analyze the business, running complex queries against huge historical datasets to spot trends and guide big decisions. One is all about operational speed; the other is for strategic insight.

Understanding The Core Difference Between OLTP And OLAP

Let's use a practical example. Picture a busy e-commerce site on Black Friday. The system processing your order, confirming payment, and updating inventory in milliseconds is an OLTP (Online Transaction Processing) system. Its entire purpose is to handle a massive number of small, fast, and concurrent transactions without a single failure. The priorities here are speed, reliability, and data integrity to keep the business running smoothly.

Now, think about the marketing team planning next year's Black Friday sale. They're using an OLAP (Online Analytical Processing) system to ask tough questions like, "Which marketing channels drove the highest-value customers in the last three years?" or "What's the correlation between a specific discount and repeat purchases?" This system isn't touching live orders; it’s sifting through terabytes of historical data to uncover patterns that inform strategy.

Quick Look: OLTP vs OLAP At A Glance

These two systems are built on fundamentally different architectures because they serve opposing needs—one is designed to capture data, while the other is meant to explore it. This division influences everything from database design to the kinds of queries they can handle efficiently.

For a clearer picture, here’s a quick summary of their key differences.

| Attribute | OLTP (Online Transaction Processing) | OLAP (Online Analytical Processing) |
|---|---|---|
| Primary Goal | Run day-to-day business operations | Support business intelligence and decision-making |
| Data Focus | Current, real-time transactional data | Historical, aggregated, and multidimensional data |
| Typical Users | Front-line workers, customer-facing applications | Data analysts, business managers, executives |
| Workload | Short, simple read/write transactions | Complex, read-heavy analytical queries |
| Data Model | Highly normalized (3NF) to ensure integrity | Denormalized (Star/Snowflake Schema) for query speed |

This table shows why trying to force one system to do the job of the other rarely works out well. They are specialized tools for very different problems.

The market for both reflects how essential they are. The global OLTP market reached USD 35.7 billion in 2024 and is expected to climb to USD 83.8 billion by 2033, fueled by demand for instant processing in cloud and AI applications. The OLAP market, valued at roughly USD 18.5 billion, is projected to reach about USD 37.1 billion by 2032 as companies rely more heavily on deep data analysis. You can find more details in this OLAP market research.

At its core, the OLTP vs OLAP choice isn't just a technical decision—it's a strategic one. You're choosing between optimizing for operational efficiency or analytical depth, and the right architecture depends entirely on the business problem you're trying to solve.

The underlying database structure is a massive part of this equation. For OLAP, this is typically a data warehouse designed for fast analysis. To better understand this critical component, check out our guide on the modern warehouse.

Comparing OLTP And OLAP System Architectures

To really get the difference between OLTP and OLAP, you have to look under the hood at their core architectures. These systems aren't just configured differently; they're built from the ground up on opposing philosophies to serve completely different purposes. One is engineered for speed and precision in the heat of the moment, while the other is built for deep, reflective analysis over vast timelines.

This divide starts with the data model itself. OLTP systems almost universally use a highly normalized data model, often following the Third Normal Form (3NF). Normalization is all about eliminating data redundancy to maintain integrity. Think about an e-commerce checkout: your customer info, order details, and product data are all kept in separate, linked tables. This way, if you update your shipping address, it only changes in one spot, preventing messy inconsistencies.
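To make the normalization idea concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The table and column names (`customers`, `products`, `orders`, `shipping_address`) are hypothetical and the schema is simplified for illustration; a real checkout database would have more tables and constraints.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (
        customer_id INTEGER PRIMARY KEY,
        name TEXT NOT NULL,
        shipping_address TEXT NOT NULL
    );
    CREATE TABLE products (
        product_id INTEGER PRIMARY KEY,
        name TEXT NOT NULL,
        price_cents INTEGER NOT NULL
    );
    CREATE TABLE orders (
        order_id INTEGER PRIMARY KEY,
        customer_id INTEGER NOT NULL REFERENCES customers(customer_id),
        product_id INTEGER NOT NULL REFERENCES products(product_id),
        quantity INTEGER NOT NULL
    );
    INSERT INTO customers VALUES (1, 'Ada', '1 Old Street');
    INSERT INTO products VALUES (10, 'Keyboard', 4999);
    INSERT INTO orders VALUES (100, 1, 10, 1), (101, 1, 10, 2);
""")

# The address is stored in exactly one row, so a single UPDATE changes it
# for every order that references this customer.
with conn:
    conn.execute(
        "UPDATE customers SET shipping_address = ? WHERE customer_id = ?",
        ("2 New Avenue", 1),
    )

# Both orders pick up the new address through the join.
addresses = conn.execute("""
    SELECT o.order_id, c.shipping_address
    FROM orders AS o
    JOIN customers AS c ON c.customer_id = o.customer_id
    ORDER BY o.order_id
""").fetchall()
print(addresses)
```

Because orders only store a `customer_id`, there is no second copy of the address that could drift out of sync.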

OLAP systems take the opposite approach. They are designed around denormalized data models, most commonly the star schema and its close relative, the snowflake schema. In these setups, measurable events live in large central "fact" tables surrounded by smaller "dimension" tables that add context, and descriptive attributes are deliberately repeated inside the dimensions rather than split across many linked tables. This structure speeds up complex queries because the database needs only a few predictable joins, not a dozen, to answer a question like "what were our quarterly sales by region?"
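As a rough illustration of a star schema, the sketch below builds one fact table and two dimension tables in an in-memory SQLite database and answers the "quarterly sales by region" question. The names `fact_sales`, `dim_date`, and `dim_region` are assumptions chosen for the example, and SQLite stands in for a real analytical warehouse.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Dimension tables add context to each sale.
    CREATE TABLE dim_date (date_key INTEGER PRIMARY KEY, year INTEGER, quarter TEXT);
    CREATE TABLE dim_region (region_key INTEGER PRIMARY KEY, region TEXT);
    -- The fact table records one row per sale, keyed to the dimensions.
    CREATE TABLE fact_sales (
        date_key INTEGER REFERENCES dim_date(date_key),
        region_key INTEGER REFERENCES dim_region(region_key),
        amount_cents INTEGER
    );
    INSERT INTO dim_date VALUES (20240115, 2024, 'Q1'), (20240420, 2024, 'Q2');
    INSERT INTO dim_region VALUES (1, 'EMEA'), (2, 'APAC');
    INSERT INTO fact_sales VALUES
        (20240115, 1, 1000), (20240115, 2, 2500),
        (20240420, 1, 4000), (20240420, 1, 500);
""")

# One hop from the fact table to each dimension is enough to slice by
# quarter and region.
quarterly_sales = conn.execute("""
    SELECT d.quarter, r.region, SUM(f.amount_cents) AS total_cents
    FROM fact_sales AS f
    JOIN dim_date AS d ON d.date_key = f.date_key
    JOIN dim_region AS r ON r.region_key = f.region_key
    GROUP BY d.quarter, r.region
    ORDER BY d.quarter, r.region
""").fetchall()
print(quarterly_sales)
```

Every analytical question follows the same shape: start at the fact table, join the dimensions you want to slice by, and aggregate.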

The Data Schema Showdown

The choice between normalization and denormalization is the fundamental trade-off between write speed and read speed. It's the first major fork in the road that separates OLTP from OLAP.

OLTP Normalization (3NF): This model is a finely tuned machine for high-throughput write operations (INSERT, UPDATE, DELETE). By keeping data in lots of small, specific tables, it ensures each write touches only a few rows, which keeps transactions short and protects data integrity above all else. The trade-off? Analytical queries that need to pull from many tables become slow and unwieldy.

OLAP Denormalization (Star Schema): This model is all about optimizing for read-heavy, complex queries. By pre-joining data into fewer, wider tables, it dramatically speeds up the massive scans needed for business intelligence. The compromise here is that loading data is more complicated, and data redundancy is simply the price you pay for performance.

The core architectural principle is this: OLTP prioritizes data integrity and write performance to run the business, while OLAP prioritizes query performance and read efficiency to analyze the business. This single difference dictates nearly every other design choice.

Transaction Types And Concurrency Needs

Another crucial architectural split is the kind of transactions each system is built to handle. OLTP systems are on the front lines, managing a huge volume of concurrent users performing short, simple transactions. Imagine thousands of people adding items to a cart, checking out, or updating their profile all at once. Each operation is tiny, but the system has to juggle them all simultaneously without dropping a single one. This demands incredibly robust concurrency controls and locking mechanisms to ensure ACID (Atomicity, Consistency, Isolation, Durability) compliance.
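The atomicity part of ACID can be sketched with Python's built-in `sqlite3` module. The `inventory` and `orders` tables and the `place_order` helper are hypothetical; the point is only that the order row and the stock decrement succeed or fail together.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE inventory (
        product_id INTEGER PRIMARY KEY,
        stock INTEGER NOT NULL CHECK (stock >= 0)
    );
    CREATE TABLE orders (
        order_id INTEGER PRIMARY KEY AUTOINCREMENT,
        product_id INTEGER NOT NULL,
        quantity INTEGER NOT NULL
    );
    INSERT INTO inventory VALUES (10, 3);
""")

def place_order(product_id: int, quantity: int) -> bool:
    """Record the order and decrement stock as one atomic unit."""
    try:
        # The connection context manager commits on success and rolls
        # back if an exception escapes the block.
        with conn:
            conn.execute(
                "INSERT INTO orders (product_id, quantity) VALUES (?, ?)",
                (product_id, quantity),
            )
            conn.execute(
                "UPDATE inventory SET stock = stock - ? WHERE product_id = ?",
                (quantity, product_id),
            )
        return True
    except sqlite3.IntegrityError:
        # The CHECK constraint rejected negative stock, so the INSERT
        # above was rolled back as well.
        return False

first = place_order(10, 2)   # enough stock
second = place_order(10, 2)  # would oversell, so nothing is written
stock = conn.execute("SELECT stock FROM inventory WHERE product_id = 10").fetchone()[0]
order_count = conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
print(first, second, stock, order_count)
```

A production OLTP database adds isolation between concurrent sessions and durable storage on top of this, which a single in-memory connection cannot demonstrate.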

On the other hand, OLAP transactions are few but mighty. An analyst might run a single, massive query that scans billions of rows to calculate year-over-year growth. These queries are long, complex, and read-intensive. OLAP systems are optimized to handle a small number of these behemoth queries at the same time, often using columnar storage and massively parallel processing (MPP) to spread the work. The focus isn't on managing thousands of tiny writes but on executing a handful of resource-heavy reads as efficiently as possible. A deep understanding of your data environment is crucial, which is why exploring different types of business intelligence architecture is so important for data leaders.
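To see why columnar storage helps read-heavy queries, here is a toy comparison in plain Python. It is only a conceptual sketch with made-up data: real columnar engines also add compression, vectorized execution, and parallelism across nodes.

```python
# Row-oriented layout: every record keeps all of its fields together,
# which suits OLTP lookups such as "fetch order 2".
rows = [
    {"order_id": 1, "region": "EMEA", "amount_cents": 1200},
    {"order_id": 2, "region": "APAC", "amount_cents": 800},
    {"order_id": 3, "region": "EMEA", "amount_cents": 500},
]

# Column-oriented layout: every field is stored as its own list, which
# suits OLAP scans that aggregate one or two fields over many rows.
columns = {field: [row[field] for row in rows] for field in rows[0]}

# Same answer either way, but the columnar version reads only one field.
total_from_rows = sum(row["amount_cents"] for row in rows)
total_from_columns = sum(columns["amount_cents"])

# A grouped aggregate needs just two of the three columns.
totals_by_region = {}
for region, amount in zip(columns["region"], columns["amount_cents"]):
    totals_by_region[region] = totals_by_region.get(region, 0) + amount

print(total_from_columns, totals_by_region)
```

With three rows the difference is invisible, but over billions of rows, skipping the columns a query never touches is a large part of what makes analytical scans affordable.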

To get a clearer picture, let's break down the key architectural differences in a side-by-side comparison. The following table highlights how the design principles of OLTP and OLAP diverge to meet their specific goals.

Architectural Deep Dive: OLTP vs OLAP Characteristics

| Characteristic | OLTP System | OLAP System |
|---|---|---|
| Data Latency | Real-time: Data must be current to the millisecond to reflect live operations. | Near real-time to historical: Data is loaded periodically (e.g., hourly or daily), so some latency is expected. |
| Concurrency Model | High concurrency: Designed for thousands of simultaneous users and short transactions. | Low concurrency: Designed for a smaller number of users running long, complex queries. |
| Data Volume | Gigabytes to low terabytes: Stores current transactional data. | Terabytes to petabytes: Stores vast amounts of historical and aggregated data. |
| Query Complexity | Simple, predefined queries: Short reads and writes (INSERT, UPDATE, DELETE) that touch a few rows. | Complex, ad-hoc queries: Aggregations, joins, and window functions across multiple dimensions. |
| Primary Metric | Transactions per second (TPS): Measures the speed and throughput of operations. | Query response time: Measures how quickly complex analytical questions can be answered. |

Ultimately, these architectural decisions aren't made in a vacuum; they are direct responses to solving two very different business problems. An OLTP system’s architecture is fine-tuned to be a bulletproof system of record, while an OLAP system is built to be a powerful engine for discovery.

Real-World Scenarios: Where Each System Shines

It's one thing to talk about architecture, but it’s another to see these systems in action. Theory is great, but practical examples are where the real understanding comes from. OLTP and OLAP systems are both critical, but they solve completely different problems—one powers the day-to-day business, while the other guides its future.

Let's look at some tangible scenarios where OLTP and OLAP aren't just useful, but absolutely foundational to how a business operates and grows. You'll see pretty quickly how picking the right tool for the job directly affects everything from customer satisfaction to long-term strategy.

OLTP: The Engine of Daily Operations

Think of OLTP systems as the tireless workhorses running in the background of almost every digital interaction. They are built for one thing: handling a massive number of small, fast, and precise transactions concurrently. They keep the customer-facing side of the business running smoothly, second by second.

Here are a few classic examples where OLTP is king:

Airline Reservation Systems: When you book a flight online, the system has to check seat availability, process your payment, and confirm your reservation in a split second. This process involves thousands of people doing the same thing at once, and the system can't make a mistake—no double-booking, no payment errors. That’s pure OLTP.

ATM and Banking Transactions: Every time you withdraw cash or transfer money, it's an atomic OLTP transaction. The system ensures your balance is updated instantly and accurately. There’s zero tolerance for error or latency; financial integrity depends on it.

SaaS User Sign-Ups: When a new user creates an account for a service, an OLTP database is what’s handling the request. It writes a new user record, validates their details, and sets up their initial access. The entire goal is a frictionless, real-time onboarding.

In all these situations, the core job is processing a high volume of small, predictable write operations with bulletproof reliability.

OLAP: The Brains Behind Business Strategy

If OLTP systems keep the lights on, OLAP systems are what help you decide where to build the next power plant. They are designed to answer big, complex, open-ended questions by digging through enormous troves of historical data. This is what lets leaders see beyond the daily noise and spot meaningful trends.

Here’s where OLAP systems truly excel:

Marketing Campaign Analysis: A marketing team wants to know which campaigns actually worked. Using an OLAP system, they can analyze years of customer data, segmenting users by lifetime value, purchase frequency, or location. They can slice and dice sales data across dozens of dimensions—region, time, customer cohort—to measure real ROI and plan the next move.

Product Engagement Funnels: A product manager sees users dropping off during the onboarding process and needs to know why. An OLAP system can analyze behavioral data from millions of sessions to pinpoint the exact step where users get stuck. This isn't about one user; it's about finding patterns in the aggregate to justify a design change.

Supply Chain Optimization: A major retailer needs to stock its shelves for the holidays. By analyzing historical sales, inventory levels, supplier performance, and seasonal trends, an OLAP system can help forecast demand far more accurately than guesswork. This kind of complex, multi-faceted analysis is impractical on a transactional system, where it would be slow and would compete with live traffic for resources.
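The product funnel scenario above can be sketched in a few lines of Python. The step names and events are invented for illustration, and the sketch simply counts distinct users per step without checking event order, which a real funnel analysis would normally enforce.

```python
# Hypothetical onboarding steps, in the order users are expected to hit them.
FUNNEL_STEPS = ["signup", "verify_email", "create_project", "invite_team"]

# Hypothetical behavioral events as (user_id, event_name) pairs.
events = [
    ("u1", "signup"), ("u1", "verify_email"), ("u1", "create_project"),
    ("u2", "signup"), ("u2", "verify_email"),
    ("u3", "signup"),
    ("u4", "signup"), ("u4", "verify_email"), ("u4", "create_project"),
    ("u4", "invite_team"),
    ("u5", "signup"), ("u5", "verify_email"),
    ("u6", "signup"), ("u6", "verify_email"),
]

# Count distinct users who reached each step.
users_per_step = {
    step: len({user for user, name in events if name == step})
    for step in FUNNEL_STEPS
}

# Measure how many users are lost between consecutive steps.
drop_offs = [
    (prev, nxt, users_per_step[prev] - users_per_step[nxt])
    for prev, nxt in zip(FUNNEL_STEPS, FUNNEL_STEPS[1:])
]
worst_transition = max(drop_offs, key=lambda item: item[2])

print(users_per_step)
print(worst_transition)
```

In a warehouse the same logic would run as an aggregate query over millions of sessions; the shape of the question stays the same.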

The fundamental difference comes down to the question. OLTP answers, "What is happening right now?" by recording a single event. OLAP answers, "Why is this happening, and what should we do next?" by analyzing millions of past events.

These examples make it clear that OLTP and OLAP aren't competitors. They’re partners. You can see more detailed examples of how different teams are using their data in these self-service analytics use cases. Knowing when and where to apply each is the hallmark of a data-mature organization.

How To Choose Your Data Strategy: OLTP, OLAP, Or HTAP

Choosing between an OLTP or OLAP architecture is more than just a technical decision—it’s a foundational business move. The path you take will shape how your company operates day-to-day and how it plans for the future. The right strategy really boils down to your immediate needs, long-term goals, and overall data maturity.

For any early-stage company or a brand-new product launch, a pure OLTP system is non-negotiable. It’s the engine that powers your application, reliably processing all those critical transactions like user sign-ups, purchases, and content updates. At this stage, your entire focus is on operational stability and a smooth user experience, which is precisely what OLTP is engineered for.

But as your business scales, relying solely on OLTP starts to become a strategic blind spot. You're sitting on a growing pile of historical data that holds the keys to understanding your business. This is the inflection point where a dedicated OLAP system becomes essential, allowing you to analyze trends and customer behavior to make informed decisions without dragging down the performance of your live application.

The Rise Of The Hybrid Approach: HTAP

What if you could close the gap between real-time operations and instant analytics? That’s the core promise of Hybrid Transactional/Analytical Processing (HTAP). HTAP systems are built to handle both high-throughput transactions and complex analytical queries from a single data source, aiming to eliminate the traditional lag caused by ETL (Extract, Transform, Load) pipelines.

This approach gives businesses the power to run analytics directly on live, operational data. Think of a fraud detection system that analyzes a transaction against historical patterns in milliseconds, or an e-commerce platform that adjusts pricing on the fly based on current demand. HTAP makes these kinds of powerful, immediate insights possible.
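The fraud detection idea can be sketched as a write that is checked against historical data in the same store. SQLite is not an HTAP engine, so this only illustrates the shape of the workload; the `payments` table, the `record_payment` helper, and the `SUSPICION_FACTOR` threshold are all assumptions made for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE payments (
        payment_id INTEGER PRIMARY KEY AUTOINCREMENT,
        customer_id INTEGER NOT NULL,
        amount_cents INTEGER NOT NULL,
        flagged INTEGER NOT NULL DEFAULT 0
    );
    INSERT INTO payments (customer_id, amount_cents) VALUES
        (1, 2000), (1, 2500), (1, 1500);
""")

SUSPICION_FACTOR = 5  # hypothetical: flag payments above 5x the average

def record_payment(customer_id: int, amount_cents: int) -> bool:
    """Insert the payment and flag it if it dwarfs the customer's history."""
    with conn:
        # Analytical read: aggregate over the customer's past payments.
        average = conn.execute(
            "SELECT AVG(amount_cents) FROM payments WHERE customer_id = ?",
            (customer_id,),
        ).fetchone()[0]
        flagged = average is not None and amount_cents > SUSPICION_FACTOR * average
        # Transactional write: store the payment with the verdict attached.
        conn.execute(
            "INSERT INTO payments (customer_id, amount_cents, flagged) VALUES (?, ?, ?)",
            (customer_id, amount_cents, int(flagged)),
        )
    return flagged

normal = record_payment(1, 2200)
suspicious = record_payment(1, 50000)
print(normal, suspicious)
```

What an HTAP system adds is the ability to run this kind of aggregate over very large histories without slowing down the transactional path.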

However, HTAP isn't a silver bullet. These systems can be more complex to manage and often come with a higher price tag than a traditional separated architecture. The decision to go with HTAP really hangs on one critical question: how valuable are real-time analytical insights to your core business operations? If the answer is "very," the investment might be well worth it.

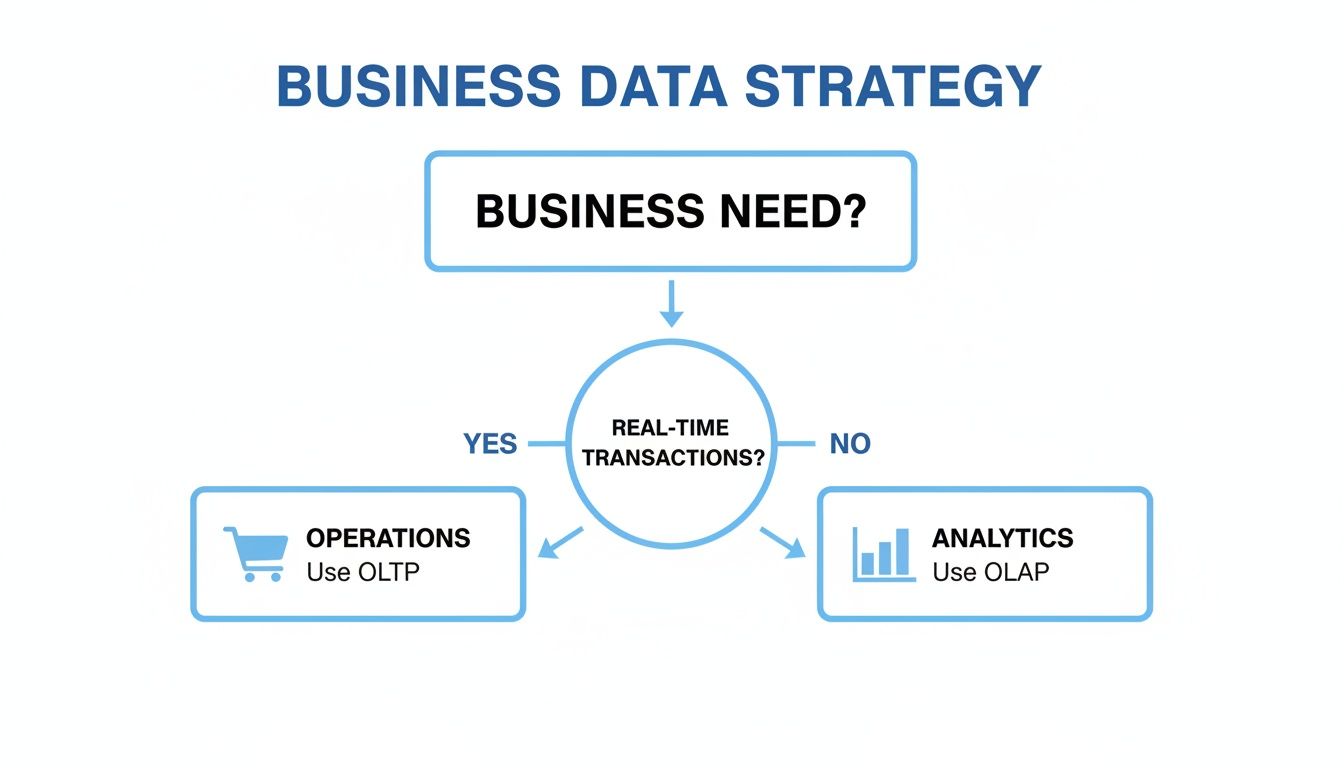

This flowchart can help visualize the primary decision point based on your core business need, guiding you toward an OLTP or OLAP focus.

As the diagram shows, it’s a straightforward choice at its core: if you're running daily operations, OLTP is your starting point. If you need strategic analysis, OLAP is where you're headed.

Making The Right Decision For Your Team

Choosing your data strategy means taking a hard look at where your company is now and where you want it to be. A purely transactional OLTP system is perfect for getting a new venture off the ground, but you'll almost certainly need the analytical power of OLAP as you grow.

Here’s a practical framework to guide your decision-making:

Startup/New Product: Begin with a robust OLTP database. Your focus should be 100% on operational excellence and application performance. Don't overcomplicate it.

Growth-Stage Company: Keep your OLTP system for operations, but it's time to build a separate OLAP data warehouse. This separation is key—it protects your app’s performance while unlocking the deep analytical capabilities you need to grow intelligently.

Data-Driven Enterprise: For organizations where real-time analytics create a significant competitive edge (like dynamic pricing or instant personalization), exploring an HTAP solution becomes a strategic imperative.

The modern challenge isn't just picking OLTP or OLAP; it's about architecting a system that lets both thrive. The goal is to guarantee operational integrity while making data seamlessly available for analysis, whether that’s through traditional pipelines or a unified HTAP platform.

Modern tools are also helping to bridge this divide. For teams using Querio, this means moving beyond manual silos. AI agents grounded in your data model can handle OLTP-driven real-time needs or complex OLAP analyses, centralizing all insights into shared Boards. This allows product managers to track OLTP-fueled growth metrics while analysts standardize reporting, all within one secure environment.

Ultimately, the best data architecture is one that evolves with your business. As you build out your capabilities, it's helpful to understand all the components of a robust data ecosystem. You might be interested in our deep dive into the modern data stack to see how all these pieces fit together.

Integrating Analytics Tools With Your Data Systems

Choosing the right database architecture is a huge first step, but the real value comes from making that data accessible and actionable for your teams. The bridge between your operational OLTP systems and your analytical OLAP environment is where raw numbers become strategic insights. This integration is what separates companies that simply record transactions from those that use data to drive genuine business intelligence.

The most common way to do this is by building a data pipeline to move information from its source to a system built for analysis. You absolutely need this because querying a live OLTP database for analytics can crush its performance, slowing down the very applications your customers and employees rely on every day.

The Role Of ETL And ELT Pipelines

To move data safely, teams have long relied on the ETL (Extract, Transform, Load) process. This classic approach involves pulling data from an OLTP source, cleaning and restructuring it on a separate server, and then loading the pristine, analysis-ready data into an OLAP data warehouse.

But with the rise of powerful cloud data warehouses like Snowflake and BigQuery, a more modern pattern, ELT (Extract, Load, Transform), has become the default for many teams. Here, you load raw data directly into the warehouse first and handle all the transformations inside the warehouse itself. This model is often faster to set up and more flexible because it leans on the processing power these platforms offer.

ETL (Extract, Transform, Load): The traditional method where data is cleaned and structured before landing in the data warehouse. This ensures only high-quality, pre-defined data enters your analytical environment.

ELT (Extract, Load, Transform): The modern approach where raw data is loaded first, and transformations happen later inside the data warehouse. This gives you much more speed and scalability, especially with large, messy datasets.

No matter which pattern you choose, the goal is the same: create a reliable, automated flow of information from your operational systems to your analytical ones.
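Here is a minimal ELT sketch, assuming two in-memory SQLite databases stand in for the OLTP source and the warehouse. The table names (`orders`, `raw_orders`, `sales_by_region`) are hypothetical; real pipelines would typically use a dedicated ingestion tool and a cloud warehouse.

```python
import sqlite3

# Hypothetical OLTP source with a few orders, including messy region casing.
source = sqlite3.connect(":memory:")
source.executescript("""
    CREATE TABLE orders (
        order_id INTEGER PRIMARY KEY,
        region TEXT,
        amount_cents INTEGER,
        status TEXT
    );
    INSERT INTO orders VALUES
        (1, 'emea', 1000, 'paid'),
        (2, 'EMEA', 2000, 'paid'),
        (3, 'apac', 1500, 'refunded'),
        (4, 'APAC', 700, 'paid');
""")

# Hypothetical warehouse with a landing table for raw rows.
warehouse = sqlite3.connect(":memory:")
warehouse.execute(
    "CREATE TABLE raw_orders (order_id INTEGER, region TEXT, amount_cents INTEGER, status TEXT)"
)

# Extract and Load: copy the rows as they are, with no cleanup yet.
rows = source.execute(
    "SELECT order_id, region, amount_cents, status FROM orders"
).fetchall()
with warehouse:
    warehouse.executemany("INSERT INTO raw_orders VALUES (?, ?, ?, ?)", rows)

# Transform: clean and aggregate inside the warehouse using SQL.
warehouse.execute("""
    CREATE TABLE sales_by_region AS
    SELECT UPPER(region) AS region, SUM(amount_cents) AS paid_cents
    FROM raw_orders
    WHERE status = 'paid'
    GROUP BY UPPER(region)
""")

sales_by_region = warehouse.execute(
    "SELECT region, paid_cents FROM sales_by_region ORDER BY region"
).fetchall()
print(sales_by_region)
```

In an ETL variant, the casing cleanup and the `paid` filter would run in Python before the insert, so only the cleaned rows would ever reach the warehouse.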

Connecting Modern Analytics Platforms

Once your data is sitting in an OLAP warehouse, you need a way for people to actually use it. This is where a business intelligence (BI) platform comes in. Modern analytics tools plug directly into these warehouses, offering an intuitive interface that makes data accessible to everyone.

This means product managers, marketing leads, and executives can explore data and find answers themselves, without having to write a single line of SQL. These platforms act as a powerful visual layer on top of your OLAP system, letting teams build interactive dashboards, spot trends, and run complex analyses with just a few clicks. It’s a game-changer that frees up your data team from an endless queue of reporting requests.

The real power of an OLAP system isn't just in storing massive amounts of data; it's in making that data easily explorable for everyone. A well-integrated analytics tool turns a static data repository into a dynamic engine for discovery and decision-making.

Driving Product Growth With Embedded Analytics

For product teams, the integration can go even deeper with embedded analytics. This is where you bring BI capabilities directly into your own application, turning data insights into a core feature for your customers. Think of a SaaS tool that gives users their own personalized performance dashboards or an "Ask your data" feature powered by AI.

This is done by using SDKs and secure embedding to place white-label charts and dashboards right into your product’s UI. Analytics stops being just an internal tool and becomes a powerful, customer-facing value proposition that drives engagement and retention.

Of course, building customer-facing data features means you have to be obsessed with security. Key considerations here are non-negotiable:

Multi-tenant Isolation: Ensuring that one customer can never, under any circumstances, see another customer's data.

Row-Level Security: Implementing strict rules that filter data based on user roles and permissions, so people only see exactly what they're authorized to see.

Compliance: Adhering to standards like SOC 2 to guarantee your data handling practices meet tough, independently audited security protocols.
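The first two considerations can be sketched at the application level as a query helper that always filters by tenant. The `metrics` table and the `fetch_metrics` function are hypothetical; some databases also offer native row-level security policies, which are generally a stronger guarantee than a filter in application code.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE metrics (
        tenant_id TEXT NOT NULL,
        metric TEXT NOT NULL,
        value INTEGER NOT NULL
    );
    INSERT INTO metrics VALUES
        ('acme', 'signups', 40), ('acme', 'churned', 3),
        ('globex', 'signups', 75);
""")

def fetch_metrics(tenant_id: str) -> list[tuple[str, int]]:
    """Return only the rows that belong to the caller's tenant."""
    if not tenant_id:
        # Refuse to run an unscoped query rather than return everything.
        raise ValueError("tenant_id is required")
    # The tenant filter is applied on every read and passed as a bound
    # parameter, never interpolated into the SQL string.
    return conn.execute(
        "SELECT metric, value FROM metrics WHERE tenant_id = ? ORDER BY metric",
        (tenant_id,),
    ).fetchall()

acme_rows = fetch_metrics("acme")
globex_rows = fetch_metrics("globex")
print(acme_rows, globex_rows)
```

Routing every embedded dashboard query through one scoped helper like this makes it harder for a single forgotten `WHERE` clause to leak another customer's data.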

OLTP vs. OLAP: Your Questions Answered

When you're in the trenches building a data architecture, the theoretical differences between OLTP and OLAP quickly turn into practical questions. Let's break down some of the most common ones that come up for product and data teams.

Can I Just Run Analytical Queries on My Production (OLTP) Database?

You technically can, but it's a really bad idea. Think of it like trying to do a deep clean of your kitchen in the middle of a dinner party—it’s going to cause chaos.

Your OLTP database is optimized for quick, small, individual transactions, ensuring your application runs smoothly for users. When you throw a heavy analytical query at it, you're asking it to scan massive amounts of data, which can lock tables, hog resources, and grind your entire application to a halt. The result? Timeouts, errors, and a terrible user experience. The best practice, and the one that will let you sleep at night, is to move that data to a dedicated OLAP system for analysis.

The risk to live operations is just too high. A single, poorly written analytical query hitting a production database can disrupt thousands of customer transactions. That's why separating these workloads is a non-negotiable rule in modern data architecture.

What’s the Point of a Data Warehouse in All This?

The data warehouse is the star of your OLAP strategy. It’s a central hub built specifically for analysis, pulling in data from all your OLTP databases and other sources like CRMs or marketing tools.

Through an ETL (Extract, Transform, Load) or ELT pipeline, all this information is cleaned, reshaped, and organized on its way into the warehouse. The result is a stable, reliable source of truth for historical analysis. In short, it’s the bridge that connects your day-to-day operations with your big-picture business intelligence, letting your team explore data freely without ever putting the production systems at risk.

How Does HTAP Fit into the OLTP vs. OLAP Picture?

Hybrid Transactional/Analytical Processing (HTAP) systems are designed to break down the wall between OLTP and OLAP. Their whole purpose is to let you run real-time analytics directly on live transactional data, cutting out the delay that comes with moving data through a pipeline.

Using smart designs like in-memory computing and columnar storage, HTAP databases can handle both high-speed transactions and complex queries from a single system. This is a huge deal for situations where you need instant insights, such as:

Real-time Fraud Detection: Analyzing a transaction for suspicious patterns the second it happens.

Dynamic Pricing: Instantly adjusting prices based on live demand and inventory data.

Instant Personalization: Customizing a user’s experience based on their very last click.

HTAP essentially gives you the best of both worlds, but it's a sophisticated solution that can come with higher costs and complexity than a traditional, separated setup.

What Are the First Steps to Building a Proper OLAP System?

Getting started with OLAP is less about picking a technology and more about defining your goals. A good plan will help you deliver value fast without getting lost in the weeds.

Here’s a practical roadmap to get you going:

Define Your Business Questions: Before anything else, figure out what you need to know. What are the key metrics that drive your business? This focus will guide all your technical decisions.

Pick a Cloud Data Warehouse: Choose a platform that fits your scale and budget. Options like Snowflake, Google BigQuery, or Amazon Redshift are powerful and scalable without requiring a massive upfront investment.

Set Up a Data Pipeline: You need a way to get data from your OLTP sources into your new warehouse. Build an ETL or ELT process, and use modern tools to automate as much of it as you can.

Start with a Small Win: Don’t try to move everything at once. Pick a single, high-impact dataset for a proof-of-concept. This lets you show value quickly and learn from a manageable project.

Connect Your Analytics Tool: Once the data is flowing, plug in your business intelligence platform. This is where your team can finally start building dashboards and finding insights, proving the value of the entire setup.
